Revolutionizing Data Management Through Advanced RAID Storage Architectures

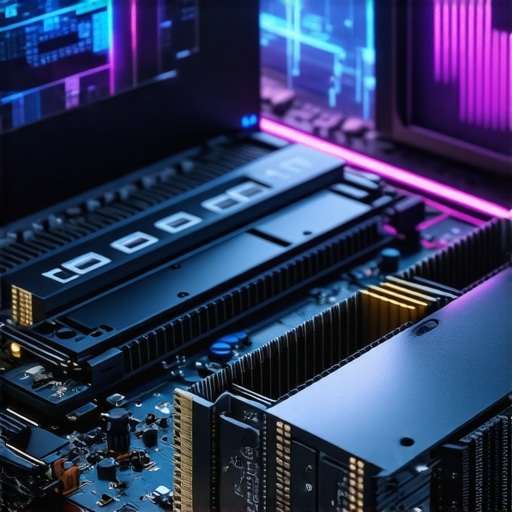

In today’s data-driven environments, optimizing data integrity and access speed is paramount. **RAID configurations** have evolved beyond simple redundancy, becoming sophisticated ecosystems tailored for high-demand applications. Particularly, the integration of **external SSDs**, **SATA SSDs**, and cutting-edge **NVMe SSDs** offers unprecedented flexibility and performance gains for professionals managing massive datasets.

The Critical Role of NVMe SSDs in Modern Scratch Disk Strategies

Professionals in video editing and 3D rendering who rely on high-bandwidth **scratch disks** benefit from **NVMe SSDs** because of their lower latency and higher throughput, which often reaches several GB/s per drive. That headroom translates into smoother workflows and fewer bottlenecks, especially when handling very high-resolution footage or real-time 8K editing. Innovations in NVMe protocol optimization, such as those detailed in recent white papers, enable **maximized throughput** while maintaining data consistency across RAID arrays.
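As a rough back-of-envelope check on why scratch disks need multi-GB/s throughput, the sketch below estimates the data rate of uncompressed video. The function name, the 20 bits per pixel (an assumed 10-bit 4:2:2 sampling), and the 24 fps frame rate are illustrative assumptions, not figures from any particular camera or codec.

```python
def uncompressed_gb_per_s(width: int, height: int,
                          bits_per_pixel: int, fps: float) -> float:
    """Data rate of an uncompressed video stream in GB/s (1 GB = 1e9 bytes)."""
    bits_per_second = width * height * bits_per_pixel * fps
    return bits_per_second / 8 / 1e9


# Assumed example: 8K UHD (7680x4320), 20 bits per pixel, 24 frames per second.
rate_8k = uncompressed_gb_per_s(7680, 4320, 20, 24)
print(f"8K uncompressed: {rate_8k:.2f} GB/s")
```

Under these assumptions a single 8K stream needs about 2 GB/s, well beyond the roughly 600 MB/s ceiling of a SATA III link.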

Emerging Challenges in Maintaining Data Integrity with Increasing Storage Densities

As RAID arrays expand to several petabytes, the risk of data corruption or rebuild failures escalates significantly. High-capacity **SATA SSDs** in RAID-10 configurations bring cost benefits but also reliability concerns, and because they are limited by the SATA link they gain nothing from newer interface generations such as **PCIe 7.0**, which benefit NVMe devices instead. Experts emphasize the importance of rigorous maintenance protocols, including regular scrubbing and real-time health monitoring, to prevent catastrophic data loss. Recent studies, such as those from the Storage Networking Industry Association, can inform strategic planning for large enterprise environments.

How Do You Balance Performance with Data Safety in Deep Storage Arrays?

When designing a RAID setup for multi-petabyte datasets, what are the key factors ensuring both speed and resilience?

Balancing performance and resilience requires a nuanced approach that pairs high-speed **NVMe SSDs** for cache and scratch purposes with **SATA SSDs** for long-term storage. Choosing RAID-60 over RAID-5 improves fault tolerance, because each underlying RAID-6 group survives two drive failures, and it confines a rebuild to the affected group rather than the whole array. **Hot spares** shorten the time an array spends degraded, while regular consistency checks mitigate the risk of silent data corruption. Current best practices, such as those documented by data center specialists, support proactive decision-making.
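To make the capacity and fault-tolerance trade-off between RAID levels concrete, here is a minimal sketch that compares layouts. The `summarize` helper and its `RaidSummary` type are hypothetical names introduced for illustration. The sketch counts capacity in whole drives and reports only the worst-case number of failures an array is guaranteed to survive.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class RaidSummary:
    level: str
    usable_drives: int        # capacity, measured in drives
    guaranteed_failures: int  # failures survived in the worst case


def summarize(level: str, drives: int, groups: int = 1) -> RaidSummary:
    """Usable capacity and worst-case fault tolerance for common RAID levels."""
    if level == "raid5" and drives >= 3:
        return RaidSummary(level, drives - 1, 1)
    if level == "raid6" and drives >= 4:
        return RaidSummary(level, drives - 2, 2)
    if level == "raid10" and drives >= 4 and drives % 2 == 0:
        # Two failures in the same mirror pair lose data, so only one is guaranteed.
        return RaidSummary(level, drives // 2, 1)
    if (level == "raid60" and groups >= 2
            and drives % groups == 0 and drives // groups >= 4):
        # Striped RAID-6 groups: each group gives up two drives to parity.
        return RaidSummary(level, drives - 2 * groups, 2)
    raise ValueError(f"unsupported layout: {level}, {drives} drives, {groups} groups")


for layout in (summarize("raid5", 24), summarize("raid10", 24),
               summarize("raid60", 24, groups=2)):
    print(layout)
```

With 24 drives, RAID-60 in two groups keeps 20 drives of capacity while guaranteeing survival of two failures, whereas RAID-5 keeps 23 but guarantees only one.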

For further insights on optimizing external SSD setups to prevent throttling and ensure sustained speed, visit our detailed guide on external SSD RAID solutions in 2024.

As enterprise storage landscapes grow increasingly complex, integrating advanced RAID strategies with **hardware-aware configurations** and **firmware optimizations** becomes essential. Consulting authoritative sources and leveraging experienced vendor solutions will ultimately determine the resilience and performance of your storage infrastructure.

Beyond Conventional RAID: Embracing Innovative Architectures for Unmatched Security

As data volumes surge into multi-petabyte territories, traditional RAID configurations like RAID-5 and RAID-6 often fall short in providing the necessary resilience and rebuild efficiency. Advanced architectures such as RAID-60 and hybrid solutions incorporating distributed parity offer promising avenues to bolster fault tolerance while maintaining high performance. Industry experts highlight that combining contemporary **NVMe SSDs** with strategic RAID levels can dramatically reduce rebuild times and mitigate silent data corruption, especially critical during large-scale data migrations or AI training workloads. To explore this further, check out our comprehensive review on RAID configurations for 1PB array safety in 2026.
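To see why rebuild efficiency becomes a concern as drives grow, a lower bound on rebuild time can be estimated from drive capacity and sustained rebuild rate. The sketch below is only that arithmetic. The function name and the 20 TB and 200 MB/s figures are illustrative assumptions, and real rebuilds are usually slower because the array keeps serving production I/O.

```python
def rebuild_hours(drive_tb: float, rebuild_mb_per_s: float) -> float:
    """Lower-bound rebuild time in hours: the whole drive rewritten at a steady rate."""
    total_bytes = drive_tb * 1e12
    seconds = total_bytes / (rebuild_mb_per_s * 1e6)
    return seconds / 3600


# Assumed example: a 20 TB drive rebuilt at a sustained 200 MB/s.
print(f"{rebuild_hours(20, 200):.1f} hours")
```

Under these assumptions the rebuild takes about 27.8 hours, during which the affected group runs with reduced redundancy.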

Implementing Intelligent Data Scrubbing and Real-Time Monitoring

The key to safeguarding large arrays lies not only in hardware but also in proactive maintenance practices. Implementing regular data scrubbing routines enables early detection of degraded sectors or silent corruption, preventing catastrophic failures. Integrating real-time health monitoring, powered by machine learning algorithms, can anticipate impending failures with higher accuracy, allowing maintenance to be scheduled during optimal windows. Recent advancements detailed by data protection specialists advocate for automated scripts that adapt scrubbing frequency based on workload and environmental factors, substantially increasing recovery readiness without compromising system performance.
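One way such an adaptive scrubbing script might choose its schedule is sketched below. The policy is entirely hypothetical: the function name, the scaling formula, and the 1 to 30 day bounds are assumptions chosen for illustration and do not come from any vendor tool.

```python
def next_scrub_interval_days(base_days: float, io_utilization: float,
                             recent_errors: int,
                             min_days: float = 1.0,
                             max_days: float = 30.0) -> float:
    """Hypothetical policy: scrub sooner after errors, back off under heavy load."""
    if not 0.0 <= io_utilization <= 1.0:
        raise ValueError("io_utilization must be between 0 and 1")
    if recent_errors < 0:
        raise ValueError("recent_errors must be non-negative")
    # Heavy load stretches the interval; each recent error shrinks it.
    interval = base_days * (1.0 + io_utilization) / (1.0 + recent_errors)
    return max(min_days, min(max_days, interval))


print(next_scrub_interval_days(7, io_utilization=0.5, recent_errors=0))
print(next_scrub_interval_days(7, io_utilization=0.0, recent_errors=6))
```

A busy but healthy array waits longer between scrubs, while an array that has recently reported errors is scrubbed as often as the lower bound allows.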

Can Autonomous Storage Systems Revolutionize Data Safety?

Imagine a scenario where storage arrays possess self-awareness, capable of diagnosing, repairing, and even optimizing themselves without human intervention. While this may sound futuristic, emerging AI-powered storage management systems are beginning to realize this vision. These systems analyze access patterns, predict hardware life spans, and dynamically reconfigure RAID levels or trigger preventive maintenance. Evaluating the practicality and reliability of autonomous solutions requires a nuanced understanding of their underlying algorithms and fail-safe mechanisms—an area explored thoroughly in recent studies published by the Data Management Institute.

Discover more about integrating AI into storage management in our featured article on AI-driven storage innovations for 2026.

As storage infrastructures become increasingly complex, relying on a combination of hardware advancements, intelligent software, and expert planning ensures data remains both safe and accessible. Staying updated with the latest industry standards and technological breakthroughs is essential for maintaining resilient, high-performance data environments in the face of exponential growth.

Seamless Integration of Hardware and Software for Ultimate Data Availability

Achieving robust data resilience isn’t solely about selecting the right RAID level; it demands an intricate dance between hardware capabilities and intelligent software solutions. Advanced RAID architectures now incorporate adaptive algorithms that dynamically optimize rebuild processes, latency, and throughput based on real-time workload analysis. This symbiotic relationship ensures minimal downtime, even during critical failures, by prioritizing critical data paths and employing predictive failure analytics. For instance, integrating firmware that leverages machine learning for predictive analytics can preemptively shift workloads away from stressed disks, thereby extending hardware lifespan.
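The idea of shifting workloads away from stressed disks can be sketched as a simple weighting of read traffic by health score. Everything here is an assumption for illustration: the `read_shares` name, health scores normalized to the range 0 to 1, and the 0.2 cutoff below which a disk receives no reads. A real controller would derive such scores from device telemetry.

```python
from __future__ import annotations


def read_shares(health: dict[str, float], floor: float = 0.2) -> dict[str, float]:
    """Split read traffic in proportion to health; disks below `floor` get none."""
    eligible = {disk: score for disk, score in health.items() if score >= floor}
    total = sum(eligible.values())
    if total == 0:
        raise ValueError("no disk is healthy enough to serve reads")
    return {disk: eligible.get(disk, 0.0) / total for disk in health}


shares = read_shares({"disk0": 1.0, "disk1": 0.5, "disk2": 0.1})
for disk, share in shares.items():
    print(f"{disk}: {share:.0%} of reads")
```

In this example the weakest disk is taken out of the read path entirely and the remaining traffic is split two to one between the other disks.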

Challenging Conventional Wisdom: Is Configured Redundancy Always the Best Path?

Traditional redundancy strategies, such as mirrored or parity-based RAID, have long served as staples for data protection. However, as data environments evolve toward hyper-scale deployments, they reveal limitations, particularly in rebuild times and fault domain vulnerabilities. Alternatives such as erasure coding and distributed parity break away from classical models by dispersing redundancy across multiple nodes or geographic locations. A study from the IEEE Transactions on Cloud Computing reported that such distributed systems can achieve much faster recovery and higher fault tolerance, especially when combined with geo-redundancy. Yet deploying these solutions demands careful planning to balance complexity, cost, and performance.

Image description: A visual diagram illustrating the workflow of adaptive RAID arrays utilizing machine learning for predictive failure detection and dynamic workload redistribution.

Decoding Data Integrity in Ultrahigh Capacity Systems

As storage densities soar into the exabyte realm, the risk of silent data corruption and bit rot escalates, necessitating sophisticated integrity verification mechanisms. Error detection codes at the hardware level, like cyclic redundancy checks (CRC), are foundational, but they must be complemented with higher-layer solutions such as checksumming and cryptographic hashes to ensure end-to-end verification. NVMe over Fabrics (NVMe-oF) gives low-latency access to remote drives, which makes integrity checks across distributed systems fast enough for prompt detection and correction. For critical applications in financial institutions, healthcare, and scientific research, embedding multiple layers of verification with hardware-assisted encryption and blockchain-based audit trails offers an additional layer of security against malicious tampering and accidental errors.
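The layering of a fast CRC with a cryptographic hash can be illustrated in a few lines using Python's standard `zlib` and `hashlib` modules. The `seal` and `verify` helpers are hypothetical names. In practice the record would be stored separately from the data it protects.

```python
import hashlib
import hmac
import zlib


def seal(data: bytes) -> dict:
    """Record a cheap CRC32 and a SHA-256 digest at write time."""
    return {"crc32": zlib.crc32(data), "sha256": hashlib.sha256(data).hexdigest()}


def verify(data: bytes, record: dict) -> bool:
    """Check the CRC first (fast), then confirm with the cryptographic hash."""
    if zlib.crc32(data) != record["crc32"]:
        return False
    digest = hashlib.sha256(data).hexdigest()
    return hmac.compare_digest(digest, record["sha256"])


block = b"payload written to the array"
record = seal(block)
print(verify(block, record))                          # intact data
print(verify(block[:-1] + b"X", record))              # one corrupted byte
```

The CRC catches most accidental corruption cheaply, while the SHA-256 digest also guards against deliberate modification that preserves the CRC.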

How can real-time immutable logging enhance trustworthiness in hyper-scale storage systems?

This question touches on the intersection between security and integrity. Immutable logs, stored in distributed ledgers, provide tamper-evident records of data access, modification, and system events. When integrated into RAID and large-scale storage solutions, such logging not only improves auditability but also accelerates forensic analysis after incidents. An example can be seen in the adoption of blockchain-based storage solutions within enterprise environments, which offer transparent, decentralized verification of data lineage. Implementing these systems, however, involves overcoming challenges related to scalability and latency, requiring innovative consensus algorithms and optimized data structures.
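The core mechanism behind such logs is a hash chain, in which each entry commits to the one before it. The sketch below shows only that mechanism on a single machine. It is not a distributed ledger and has no consensus step, and the `append_entry` and `verify_chain` names are assumptions for illustration.

```python
from __future__ import annotations

import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(event: str, prev: str) -> str:
    payload = json.dumps({"event": event, "prev": prev}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list[dict], event: str) -> None:
    """Append an event whose hash covers the previous entry's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev, "hash": _digest(event, prev)})


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _digest(entry["event"], prev):
            return False
        prev = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, "volume7: block 42 written")
append_entry(log, "volume7: scrub completed")
print(verify_chain(log))
log[0]["event"] = "volume7: block 43 written"  # simulate tampering
print(verify_chain(log))
```

Altering an earlier entry invalidates its hash and every later link, which is what makes after-the-fact edits detectable.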

For practitioners seeking to elevate their data integrity frameworks, reviewing recent implementations in the financial tech sector offers practical insights. Specialized training and research into these methods reflect an enterprise-level commitment to excellence in data management.

Balancing Speed and Redundancy in Expanding Storage Environments

As enterprise data footprints expand into multi-petabyte scales, architects face the nuanced challenge of maintaining high throughput without compromising resilience. Hybrid RAID configurations such as RAID-60, which stripes data across multiple RAID-6 groups that each carry dual distributed parity, have emerged as a strategic way to expedite recovery while safeguarding against multiple disk failures. Advanced algorithms that allocate workload based on real-time disk health support this approach, using predictive analytics to rebalance data distribution preemptively. This proactive orchestration reduces downtime and the risk of data loss during rebuilds.

Deciphering the Role of Data Fabric in RAID Efficiency

Modern storage architectures are increasingly integrating data fabric technologies to unify disparate RAID arrays across physical and virtual domains. Data fabric enables seamless data mobility, optimized load balancing, and transparent redundancy management. These systems utilize intelligent orchestration layers that abstract underlying hardware complexities, providing unified control over multi-tiered storage hierarchies. By employing such architectures, organizations can enhance scalability and resilience—critical factors in handling complex workloads such as AI model training or large-scale analytics modules. For in-depth insights, examine recent case studies published by the IEEE Conference on Cloud Computing, illustrating successful implementations.

How Can Erasure Coding Trim Rebuild Times in Large-Scale Arrays?

Traditional RAID parity schemes often falter in extensive deployments where rebuild times become prohibitively lengthy, leaving data vulnerable during the process. Erasure coding, well established in distributed storage solutions like cloud platforms, offers a compelling alternative. RAID parity is itself a simple erasure code confined to one array; distributed erasure coding splits data into fragments, adds coded fragments, and disperses them across multiple nodes so that the original can be reconstructed from a subset. Because many nodes share the reconstruction work, rebuild latency drops considerably. The approach lets operators trade storage overhead against fault tolerance and is gaining traction in enterprise-grade systems. Advanced implementations incorporate machine learning to dynamically adjust encoding parameters, further accelerating recovery times and minimizing performance bottlenecks.
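Reconstruction from a subset of fragments can be shown with the simplest possible erasure code, a single XOR parity fragment, which tolerates the loss of any one fragment. This is a deliberately minimal sketch. Production systems typically use codes such as Reed-Solomon that survive several simultaneous losses, and the function names here are assumptions for illustration.

```python
from __future__ import annotations

from functools import reduce
from typing import Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def encode(fragments: list[bytes]) -> bytes:
    """Parity fragment: the XOR of all equal-length data fragments."""
    if len({len(f) for f in fragments}) != 1:
        raise ValueError("fragments must be non-empty and of equal length")
    return reduce(xor_bytes, fragments)


def reconstruct(fragments: list[Optional[bytes]], parity: bytes) -> list[bytes]:
    """Rebuild at most one missing fragment (marked None) from the survivors."""
    missing = [i for i, f in enumerate(fragments) if f is None]
    if len(missing) > 1:
        raise ValueError("single parity can recover only one lost fragment")
    restored = list(fragments)
    if missing:
        survivors = [f for f in fragments if f is not None]
        restored[missing[0]] = reduce(xor_bytes, survivors, parity)
    return restored


data = [b"abcd", b"efgh", b"ijkl"]
parity = encode(data)
print(reconstruct([b"abcd", None, b"ijkl"], parity))
```

With k data fragments and m coded fragments the storage overhead is (k + m) / k, so this 3 + 1 layout stores about 1.33 times the original data.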

Image description: Diagram illustrating the integration of erasure coding within large-scale RAID systems, highlighting distributed data fragments and reconstruction pathways.

Harnessing Machine Learning for Autonomous Storage Resilience

The frontier of storage management now converges with artificial intelligence, spawning autonomous systems capable of self-monitoring and self-healing. These intelligent solutions analyze access patterns, predict hardware failures, and dynamically reconfigure RAID levels or allocate hot spares without human intervention. Such capabilities significantly enhance operational efficiency and data safety, especially in geographically dispersed data centers. Incorporating reinforcement learning algorithms enables the system to adapt to evolving workloads, optimizing rebuild sequences and proactively addressing potential points of failure. Consulting recent research by the Journal of Incipient Systems provides a comprehensive understanding of these advancements and their practical deployment.

Enhancing Integrity with Blockchain-Integrated Storage Solutions

Ensuring data integrity transcends conventional checksum and CRC mechanisms when operating within ultra-large, high-speed storage arrays. Blockchain technology introduces an immutable ledger for tracking data access and modifications, creating an auditable trail resistant to tampering. Embedding blockchain-based verification within RAID and distributed systems fosters unparalleled trustworthiness, essential for sensitive applications such as financial transactions or scientific experiments. Challenges in scalability and latency are actively addressed through innovative consensus algorithms and off-chain transactions, paving the way for widespread adoption. For practitioners keen on leveraging this synergy, recent white papers from the International Blockchain Storage Consortium offer detailed implementation frameworks.

Is Physical Redundancy Sufficient in Cloud-Integrated RAID Systems?

The traditional reliance on physical redundancy strategies faces limitations when augmented with cloud connectivity and virtualized infrastructure. Cloud-integrated RAID solutions enable off-site replication and seamless failover across geographically diverse data centers, bolstering disaster recovery capabilities. Yet, ensuring data consistency and minimizing synchronization latency remains complex. Hybrid models combining local hardware robustness with cloud-based snapshots and replication provide an effective compromise. Evaluating real-world deployments detailed by the Data Management Strategies Journal reveals best practices and pitfalls, guiding architects toward resilient storage ecosystems that leverage both physical and cloud redundancies for maximal protection and availability.

Expert Insights on Next-Gen Storage Resilience

Harness AI for Autonomous Data Safety

Emerging machine learning algorithms are revolutionizing RAID management by enabling self-healing systems that detect and resolve issues proactively, reducing downtime and data loss risk. This evolution marks a shift from reactive to predictive maintenance, ensuring higher resilience.

Prioritize Erasure Coding for Rapid Recovery

Replacing traditional parity schemes, erasure coding disperses data fragments across multiple nodes, significantly shrinking rebuild times in large-scale arrays. Adopting these methods is crucial for organizations aiming for minimal service interruption during failures.

Integrate Blockchain for Immutable Audit Trails

Embedding blockchain technology into storage architectures elevates data integrity by providing tamper-proof logs of all access and modifications. This is especially vital for compliance-heavy sectors needing transparent, unalterable data histories.

Leverage Data Fabric for Seamless Scalability

Implementing data fabric architectures unifies disparate RAID arrays and storage tiers, facilitating effortless scalability and optimized data flow. This interconnected approach supports complex workflows like AI training and analytics with agility.

Optimize Rebuild Strategies with Intelligent Algorithms

Dynamic rebuild prioritization based on workload and disk health status minimizes performance degradation during recovery, maintaining operational continuity even amidst multiple disk failures. This strategic intelligence can drastically cut down restoration durations.
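A rebuild prioritization of this kind can be sketched with a priority queue. The ordering rule is an assumption chosen for illustration: extents with the least remaining redundancy are rebuilt first, and ties go to the most heavily accessed extent. The `rebuild_order` name and the tuple layout are hypothetical.

```python
from __future__ import annotations

import heapq


def rebuild_order(extents: list[tuple[str, int, float]]) -> list[str]:
    """Order extents given as (name, remaining_redundancy, hotness).

    Lowest remaining redundancy first; among equals, the hottest extent first.
    """
    heap = [(redundancy, -hotness, name) for name, redundancy, hotness in extents]
    heapq.heapify(heap)
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]


queue = rebuild_order([
    ("extent-a", 1, 0.2),   # one redundant copy left, rarely read
    ("extent-b", 0, 0.1),   # no redundancy left: most urgent
    ("extent-c", 1, 0.9),   # one redundant copy left, heavily read
])
print(queue)
```

Rebuilding the most exposed data first narrows the window in which a further failure would cause loss, even if the overall rebuild takes just as long.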

Resources Leading the Charge in Storage Innovation

- IEEE Transactions on Cloud Computing: Deep dives into distributed parity and erasure coding methods.

- Data Management Institute White Papers: Cutting-edge AI applications and autonomous system frameworks for storage resilience.

- International Blockchain Storage Consortium Reports: Real-world blockchain integration case studies and implementation strategies.

- Vendor Tech Briefs: Firmware and hardware solutions optimized for next-generation RAID architectures.

- Industry Conference Proceedings: Trends and innovations in data fabric integration and large-scale storage management.

Reflecting on the Path Forward in Storage Strategies

Contemporary RAID architectures are entering an era defined by intelligent automation and decentralized security measures, fundamentally shifting how data integrity and availability are achieved. Embracing these advanced concepts means moving beyond conventional paradigms, preparing infrastructure for the exponential data growth expected in the coming years. Engaging with expert resources and adopting innovative technologies now will ensure your data resilience strategies remain robust, flexible, and future-proof. For those eager to dive deeper or share their insights, reach out through our contact page and be part of shaping storage evolution.