Revolutionizing Data Access Speed with Cutting-Edge Storage Technologies

In data management, ultra-fast storage has moved from a benchmark figure to a critical component of enterprise infrastructure and professional workflows. As we approach 2026, understanding how NVMe SSDs, RAID configurations, and external SSD ecosystems work together is essential for maximizing throughput and protecting data integrity.

The Nuances of NVMe SSD Performance in High-Speed Environments

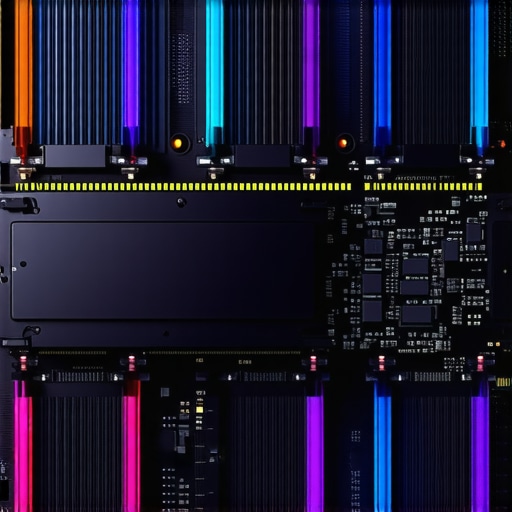

NVMe SSDs have cemented their role as the backbone of high-performance storage, offering lower latency and higher IOPS than SATA SSDs. Newer interface generations keep raising the ceiling: PCIe 5.0 signals at 32 GT/s per lane, and PCIe 6.0 and 7.0 each double the per-lane rate again, which takes a four-lane link well beyond 160Gbps. Unlocking that potential still requires careful tuning and cooling to prevent throttling. Vendor white papers, for instance, describe firmware optimizations and thermal management techniques aimed at sustained performance.
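To put the interface figures above in perspective, here is a minimal sketch that computes the raw one-direction rate of a link from the per-lane signaling rate of each PCIe generation. The function name `raw_link_gbps` is illustrative, and the result deliberately ignores encoding and protocol overhead, so real drive throughput will be lower.

```python
# Per-lane signaling rates in GT/s for each PCIe generation.
PCIE_GTS_PER_LANE = {"3.0": 8, "4.0": 16, "5.0": 32, "6.0": 64, "7.0": 128}


def raw_link_gbps(generation: str, lanes: int = 4) -> int:
    """Raw one-direction link rate in Gbps (no encoding/protocol overhead)."""
    return PCIE_GTS_PER_LANE[generation] * lanes


if __name__ == "__main__":
    for gen in PCIE_GTS_PER_LANE:
        gbps = raw_link_gbps(gen)
        # Divide by 8 to express the same raw rate in gigabytes per second.
        print(f"PCIe {gen} x4: {gbps} Gbps raw (about {gbps / 8:.0f} GB/s)")
```

A typical NVMe SSD uses four lanes, which is why `lanes` defaults to 4. On those raw numbers, a four-lane link first passes 160Gbps with PCIe 6.0.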

Strategic Role of RAID Storage in Modern Data Architectures

RAID configurations, particularly RAID 10 and RAID 6, remain vital for balancing redundancy and speed, especially in enterprise data centers handling hundreds of terabytes. Expert analyses favor setups that adapt to workload fluctuations and include hot spares, so that a rebuild starts as soon as a drive fails and the array spends less time degraded, as discussed comprehensively in RAID storage strategies for 2025.
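The redundancy-versus-capacity trade-off between the two levels can be made concrete with a little arithmetic. The sketch below assumes equal-sized drives and ignores filesystem and metadata overhead; the function name `usable_tb` and the example drive counts are illustrative only.

```python
def usable_tb(level: str, drives: int, drive_tb: float) -> float:
    """Usable capacity in TB for an array of equal-sized drives."""
    if level == "raid10":
        # Mirrored pairs striped together: half the raw capacity is usable.
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, at least 4")
        return drives / 2 * drive_tb
    if level == "raid6":
        # Two drives' worth of capacity is consumed by dual parity.
        if drives < 4:
            raise ValueError("RAID 6 needs at least 4 drives")
        return (drives - 2) * drive_tb
    raise ValueError(f"unsupported level: {level}")


if __name__ == "__main__":
    for level in ("raid10", "raid6"):
        print(level, usable_tb(level, drives=12, drive_tb=20.0), "TB usable")
```

With twelve 20TB drives, RAID 10 yields 120TB and RAID 6 yields 200TB. RAID 6 survives any two drive failures, while RAID 10 survives one failure per mirrored pair and generally rebuilds faster.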

Enhancing External SSDs for Professional Workflows

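External SSDs built on Thunderbolt 5 and USB4 are transforming portable high-speed storage. Recent work on cable design and interface bottlenecks aims to let external drives sustain their full link rate without throttling, which is critical for 8K video editing and large-scale scientific data analysis. The 160Gbps figure quoted for such drives sits above the 80Gbps symmetric rate (120Gbps in asymmetric mode) that Thunderbolt 5 and USB4 Version 2.0 specify, so it is best treated as a target and checked against measured sustained throughput. For professionals, selecting external solutions that come as close as possible to internal NVMe speeds is paramount for seamless workflows.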
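For workflow planning, link rate matters mostly as transfer time. The following sketch estimates how long a dataset takes to copy over a given link. The `efficiency` factor of 0.8 is an assumed placeholder for protocol and drive overhead, not a measured value, and `transfer_seconds` is a hypothetical helper name.

```python
def transfer_seconds(size_bytes: float, link_gbps: float,
                     efficiency: float = 0.8) -> float:
    """Estimated copy time in seconds over a link of `link_gbps` gigabits/s.

    `efficiency` is the assumed fraction of the raw link rate that reaches
    the application as sustained payload throughput.
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    usable_bytes_per_s = link_gbps * 1e9 / 8 * efficiency
    return size_bytes / usable_bytes_per_s


if __name__ == "__main__":
    one_tb = 1e12  # 1 TB of footage, decimal units
    for gbps in (40, 80, 160):
        print(f"{gbps} Gbps link: {transfer_seconds(one_tb, gbps):.1f} s per TB")
```

Replacing the assumed efficiency with a figure measured on your own drive and cable gives a more honest estimate than the headline link rate.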

Addressing the Challenges of High-Speed Storage Environments

Despite technological strides, challenges persist, including thermal throttling, connection stability, and system compatibility. A common question is how to prevent NVMe SSD throttling in dense RAID arrays or external setups. Recent technical reports indicate that well-designed heatsinks and current firmware significantly reduce thermal throttling, allowing hardware to sustain peak throughput for longer.
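Before changing heatsinks or firmware, it helps to know how hot the drives actually run. The sketch below assumes a Linux host whose kernel exposes NVMe temperatures through the hwmon sysfs interface (a `name` file containing `nvme` and a `temp1_input` file in millidegrees Celsius). The 70°C warning threshold is a hypothetical value; the real throttle point is drive-specific and should come from the vendor's datasheet.

```python
from pathlib import Path


def nvme_temps_c(hwmon_root: str = "/sys/class/hwmon") -> dict[str, float]:
    """Return {hwmon device name: temperature in Celsius} for NVMe sensors."""
    temps: dict[str, float] = {}
    root = Path(hwmon_root)
    if not root.is_dir():
        return temps  # not Linux, or hwmon is unavailable
    for dev in sorted(root.iterdir()):
        name_file = dev / "name"
        temp_file = dev / "temp1_input"
        if not (name_file.is_file() and temp_file.is_file()):
            continue
        if name_file.read_text().strip() != "nvme":
            continue
        # hwmon reports millidegrees Celsius as an integer string.
        temps[dev.name] = int(temp_file.read_text().strip()) / 1000.0
    return temps


def drives_near_throttle(temps: dict[str, float],
                         warn_c: float = 70.0) -> list[str]:
    """List sensors at or above the (assumed) warning threshold."""
    return [name for name, temp in temps.items() if temp >= warn_c]


if __name__ == "__main__":
    readings = nvme_temps_c()
    print(readings)
    print("near throttle:", drives_near_throttle(readings))
```

Logging these readings during a sustained write test shows whether a cooling change moves the drive away from its throttle point.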

Will PCIe 6.0 and 7.0 Render Current Storage Solutions Obsolete? – An Expert Inquiry

This question resonates within data engineering circles as interface standards evolve. PCIe 6.0 doubles the bandwidth of PCIe 5.0, yet early evidence suggests that current cooling and firmware strategies will remain relevant through the transition. Hardware architects are nevertheless exploring active cooling innovations, including liquid cooling for NVMe SSDs.

How can businesses optimize their storage architecture to prevent bottlenecks at capacity scales exceeding 200TB?

Scalable RAID configurations, tiered storage with NVMe cache layers, and external SSDs with high durability ratings are the key steps. Combined, these strategies help keep throughput and latency steady as capacity grows, which protects productivity.

To deepen your understanding of high-speed storage solutions, see our comprehensive guide on external SSDs. To share your experience or ask about a specific challenge, use our contact page.

As storage hardware keeps pushing boundaries, staying abreast of emerging standards, cooling innovations, and optimized configurations remains essential. Continuous research and adaptation are the cornerstones of maintaining a competitive edge in data-intensive fields.

The Art of Balancing Performance and Durability in Enterprise Storage

As high-speed data demands continue to escalate, professionals face the challenge of maximizing throughput without compromising hardware longevity. High-performance NVMe SSDs, especially those built for the newest PCIe generations, are susceptible to overheating and wear, which can lead to unexpected failures. Advanced cooling, such as liquid cooling systems and purpose-built heatsinks, therefore becomes crucial. Choosing SSDs with robust endurance ratings and adopting tiered storage architectures further mitigates these issues and helps sustain performance over years of intensive use. For in-depth insights, see our comprehensive guide on external SSDs.

Optimal RAID Architectures for Scalable Data Environments

Designing RAID setups that scale past 200TB requires configurations that go beyond traditional methods. Schemes that incorporate hot spares, hot-swap capability, and adaptive rebuild strategies significantly reduce downtime and the risk of data loss. Nested or hybrid RAID models, such as striping across RAID 6 groups or combining RAID 10 and RAID 6 tiers, offer both redundancy and speed advantages. Integrating NVMe cache layers within RAID arrays also accelerates access times, providing a buffer against bottlenecks during peak loads. For detailed strategies, read our article on RAID storage explained.
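Rebuild strategy matters because the time an array spends degraded grows with drive size. As a rough sketch, the best-case rebuild time is the drive capacity divided by the sustained rebuild rate. The rates below are illustrative assumptions, and real rebuilds that compete with production I/O take longer.

```python
def rebuild_hours(drive_tb: float, rebuild_mb_per_s: float) -> float:
    """Best-case hours to rewrite one replacement drive end to end."""
    if rebuild_mb_per_s <= 0:
        raise ValueError("rebuild rate must be positive")
    seconds = drive_tb * 1e12 / (rebuild_mb_per_s * 1e6)
    return seconds / 3600


if __name__ == "__main__":
    # Assumed sustained rebuild rates: an HDD-class and an NVMe-class figure.
    for label, rate in (("200 MB/s", 200), ("2000 MB/s", 2000)):
        print(f"20 TB drive at {label}: {rebuild_hours(20, rate):.1f} h")
```

At the assumed 200 MB/s, a 20TB drive needs more than a day to rebuild. That is the window in which a hot spare, by starting the rebuild immediately, reduces exposure to a second failure.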

How Can Future Storage Technologies Disrupt Industry Norms?

This question prompts us to consider emerging innovations like quantum storage, DNA data encoding, and optical storage systems. While still largely experimental, these technologies promise unprecedented density and, in some cases, transfer speeds, and could challenge current SSD-based architectures within the next decade. Staying ahead requires not only monitoring these developments but also experimenting with hybrid solutions that prepare workloads for transition. For example, integrating emerging storage media with existing PCIe-based SSD infrastructure could lead to more resilient and scalable architectures. Experts suggest that adaptive, modular storage systems are the key to navigating this future landscape. If you'd like to explore how to future-proof your infrastructure, our contact page offers personalized advice.

Harnessing Quantum Storage Disruptions for Enterprise Resilience

Looking ahead, quantum storage emerges as a potential paradigm shift. Unlike classical storage media, it relies on superposition and entanglement, and the state space of a quantum memory grows exponentially with the number of qubits. The classical information that can be read back is more limited, however: Holevo's theorem caps it at n bits for n qubits, so density claims deserve caution. For organizations seeking to future-proof their infrastructure, understanding the practical implications and current experimental progress becomes critical. Companies such as D-Wave Systems are reported to be prototyping coherent quantum memory elements that store qubits with extended coherence times, a necessity for viable quantum data storage. Moving from experiment to operation involves overcoming decoherence, developing quantum error correction techniques, and integrating hybrid classical-quantum architectures. For enterprises, the strategic question becomes: How can quantum storage be integrated into existing high-speed data workflows without disrupting current performance levels?

What are the practical steps to incorporate nascent quantum storage within a hybrid data ecosystem?

Implementing such systems calls for incremental phases, starting with quantum-assisted algorithms that accelerate specific computational subroutines and gradually evolving into hybrid storage modules. Pairing quantum key distribution for secure access with classical high-speed NVMe SSDs can provide resilient, layered security during transitional phases. Middleware that abstracts quantum operations from traditional data access layers helps maintain compatibility with legacy infrastructure. As Dr. Alexei Fedorov, a leading researcher in quantum data storage, emphasizes, “Developing standardized interfaces and robust error mitigation strategies paves the way for scalable integration.” Forward-looking organizations can act now by establishing pilot programs, collaborating with research institutions, and investing in quantum-ready hardware, so that when these systems mature they remain at the forefront of storage innovation.

DNA Encoding: Unlocking Infinite Data Potential Amid Storage Constraints

Given the space restrictions inherent to conventional storage media, biological DNA presents a revolutionary avenue for data encoding. With a demonstrated potential of up to 215 petabytes per gram, DNA encoding offers unmatched density and durability. Recent breakthroughs, such as the synthesis of error-corrected DNA strands with over 99.99% fidelity, point to feasibility for archives lasting millennia, provided storage conditions are well controlled. Costs remain prohibitively high for mainstream deployment, but ongoing research into enzyme-assisted synthesis and retrieval methods is reducing the financial barriers. These innovations could redefine data centers, making physical space far less of a constraint and extending data longevity almost indefinitely.

How might enterprises integrate DNA storage within existing hierarchies to optimize for both speed and durability?

Integrating DNA storage requires a hybrid architectural approach: frequently accessed data stays on NVMe SSDs, while backups and seldom-accessed archives move to DNA-based media. Translation layers that encode data into DNA sequences transparently keep the system simple for users. Dedicated laboratories or external partnerships for DNA synthesis and sequencing can ease resource constraints. The rapid evolution of microfluidic synthesis platforms has already enabled scalable and automated DNA data encoding, as detailed in recent biotech reports. Strategic planning should also consider a blockchain-verified catalog of data footprints to track encoded sequences and support error correction during retrieval cycles.
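The core of such a translation layer can be illustrated with the simplest possible mapping: two bits per nucleotide. This is only a sketch of the idea. Practical DNA storage codecs add error correction and avoid problematic sequences such as long runs of one base, none of which is modeled here. The `BASES` ordering and function names are arbitrary choices for the example.

```python
BASES = "ACGT"  # index 0..3 encodes the bit pairs 00, 01, 10, 11


def encode(data: bytes) -> str:
    """Map each byte to four nucleotides, most significant bit pair first."""
    out = []
    for byte in data:
        for shift in (6, 4, 2, 0):
            out.append(BASES[(byte >> shift) & 0b11])
    return "".join(out)


def decode(strand: str) -> bytes:
    """Inverse of encode(); raises ValueError on malformed input."""
    if len(strand) % 4:
        raise ValueError("strand length must be a multiple of 4")
    out = bytearray()
    for i in range(0, len(strand), 4):
        byte = 0
        for base in strand[i:i + 4]:
            byte = (byte << 2) | BASES.index(base)  # ValueError if not A/C/G/T
        out.append(byte)
    return bytes(out)


if __name__ == "__main__":
    strand = encode(b"Hi")
    print(strand)          # CAGACGGC
    print(decode(strand))  # b'Hi'
```

At two bits per base, one byte costs four nucleotides. The redundancy that real codecs add for error correction lowers that density further.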

Emerging Optical Storage: Lighting the Path Forward for Massive Data Requirements

Optical storage technologies, like holographic data storage and multilevel CDs, are undergoing a renaissance driven by the need for higher densities and faster retrieval speeds. Companies such as Gen1 and Sony have developed prototypes capable of storing terabytes within a single disc, with data access times rivaling magnetic disks. The key advantage lies in volumetric data encoding—using laser-based methods that write and read data throughout the disc volume, not just on its surface. Such innovations could facilitate petabyte-scale archives in a compact, energy-efficient form factor, revolutionizing the landscape for long-term data preservation.

What practical challenges must be addressed to scale optical storage solutions for enterprise use?

Overcoming issues of precise laser calibration, material stability over decades, and cost-effective manufacturing is paramount. Ongoing research priorities include error mitigation algorithms for the read/write phases, holographic media compositions optimized for longevity, and standardized interfaces for integration with existing data management systems. Industry collaboration is also crucial: consortia focused on open standards will accelerate adoption and interoperability. As Dr. Maria Lopez from the Optical Storage Alliance emphasizes, “Investing in robust error correction and manufacturing scalability are the keystones for optical storage to transition from niche to mainstream enterprise use.”

Harnessing Adaptive Storage Networks for Seamless Scalability

In the rapidly expanding universe of data, static storage infrastructures struggle to keep pace with burgeoning demands. Cutting-edge strategies involve deploying software-defined storage (SDS) systems that dynamically allocate resources based on real-time analytics, thereby optimizing throughput and resilience. Integrating these with hyper-converged infrastructures enables organizations to build flexible, agile ecosystems capable of scaling beyond petabyte thresholds without compromising performance. Recent industry reports highlight the transformative potential of matrixed storage architectures that distribute loads intelligently across heterogeneous mediums, ensuring sustained efficiency.

Unlocking Tiered Storage for Velocity and Longevity

Balancing rapid data access with long-term preservation remains a quintessential challenge. Advanced tiered storage solutions leverage multi-layered hierarchies combining NVMe SSDs, high-capacity SATA disks, and cloud-based cold storage. This stratification enables critical data to reside on high-speed media while archival information migrates seamlessly to durable, low-cost repositories. Incorporating machine learning algorithms to predict access patterns ensures optimal tier placement, reducing latency and extending hardware lifespan. For expert tactics on designing such systems, consult dedicated case studies from leading cloud providers.
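The placement decision itself can be sketched in a few lines. Note that the example below substitutes a plain frequency-and-recency rule for the machine learning predictor described above; a learned model would replace `choose_tier` while keeping the same inputs and outputs. The thresholds, field names, and tier labels are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ObjectStats:
    name: str
    reads_last_30d: int
    days_since_last_access: int


def choose_tier(stats: ObjectStats, hot_reads: int = 100,
                cold_after_days: int = 90) -> str:
    """Pick a tier from simple access statistics (stand-in for an ML model)."""
    if stats.reads_last_30d >= hot_reads:
        return "nvme"        # frequently read: keep on the fastest media
    if stats.days_since_last_access >= cold_after_days:
        return "cloud-cold"  # untouched for months: archive
    return "sata"            # everything in between


if __name__ == "__main__":
    catalog = [
        ObjectStats("render-cache", reads_last_30d=4200, days_since_last_access=0),
        ObjectStats("q3-report", reads_last_30d=3, days_since_last_access=12),
        ObjectStats("2019-raw-footage", reads_last_30d=0, days_since_last_access=400),
    ]
    for obj in catalog:
        print(f"{obj.name}: {choose_tier(obj)}")
```

Keeping the policy behind a single function makes it straightforward to compare a learned predictor against this baseline on the same access logs.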

What innovative cooling techniques will redefine the thermal management of high-density storage arrays?

Emerging solutions, such as liquid immersion cooling and phase-change materials, have shown promise in dissipating heat efficiently within dense configurations. These methods not only improve performance stability but also significantly lower energy consumption. Implementing direct-to-chip cooling using microfluidic channels embedded within SSD and HDD enclosures allows for targeted thermal regulation. As data centers seek sustainable growth models, adopting such advanced cooling can become a key differentiator, enhancing both hardware longevity and environmental compliance.

Quantum-enhanced Storage: The Frontier of Data Preservation

The intersection of quantum computing and storage technology presents a paradigm shift, where qubits can encode information in superposition states, in principle enabling far higher data densities. Companies like IBM and Google are experimenting with quantum memory prototypes that showcase the potential to revolutionize archival capacity and security. Reliable long-term retention will depend on achieving much longer coherence times than today's devices offer. The main challenge lies in developing practical interfaces that harmonize classical and quantum data channels, ensuring compatibility without sacrificing speed or fidelity.

How will hybrid classical-quantum ecosystems revolutionize data protection strategies in enterprise environments?

By deploying quantum key distribution alongside conventional encryption, organizations can achieve unparalleled security. Hybrid architectures enable the rapid processing of frequently accessed data on classical hardware while safeguarding sensitive information with quantum cryptography. Developing middleware that abstracts quantum operations and ensures seamless interoperability is crucial. Additionally, establishing industry standards for quantum storage interfaces will accelerate adoption, positioning forward-thinking firms at the vanguard of secure, scalable data management.

Revolutionary DNA Data Storage for Ultra-Long-Term Archives

Biological DNA, with its remarkable density and durability, emerges as an innovative medium for archival storage solutions. Recent advancements in enzymatic synthesis enable the encoding of vast datasets in minuscule biological samples, potentially lasting for thousands of years under proper conditions. This technology offers a compelling counterpoint to conventional media, especially for cold storage, where longevity trumps access speed. Active research is focused on refining synthesis accuracy and developing cost-effective retrieval mechanisms, paving the way for DNA to serve as a backup vault of humanity’s digital legacy.

What are the strategic implications of integrating DNA storage within existing enterprise data hierarchies?

Implementing DNA storage necessitates a hybrid architecture where high-frequency data remains on SSDs or HDDs, while long-term backups transition to biological media. Developing robust translation layers that encode/decode data transparently is vital for operational efficiency. Collaborative efforts with biotech firms to standardize protocol formats and improve synthesis fidelity can facilitate seamless adoption. Moreover, establishing data verification and error-correction protocols tailored for DNA’s unique characteristics ensures data integrity, making this an attractive solution for organizations aiming for millennia-scale preservation.
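Verification is independent of the medium, so the idea can be shown with ordinary bytes. The sketch below records a CRC32 per chunk when data is archived and later reports which chunks no longer match. It detects damage but does not repair it; a real archive would pair this with an error-correcting code. The chunk size and function names are assumptions for illustration.

```python
import zlib


def make_catalog(data: bytes, chunk_size: int = 4096) -> list[int]:
    """CRC32 of each fixed-size chunk, recorded at archive time."""
    return [zlib.crc32(data[i:i + chunk_size])
            for i in range(0, len(data), chunk_size)]


def corrupted_chunks(data: bytes, catalog: list[int],
                     chunk_size: int = 4096) -> list[int]:
    """Indices of chunks whose checksum differs from the catalog."""
    current = make_catalog(data, chunk_size)
    longest = max(len(current), len(catalog))
    return [i for i in range(longest)
            if i >= len(current) or i >= len(catalog)
            or current[i] != catalog[i]]


if __name__ == "__main__":
    original = bytes(range(256)) * 64          # 16 KiB of sample data
    catalog = make_catalog(original)
    damaged = bytearray(original)
    damaged[5000] ^= 0xFF                      # flip one byte in chunk 1
    print(corrupted_chunks(bytes(damaged), catalog))  # [1]
```

Knowing which chunk failed lets the retrieval process re-sequence or re-fetch only that portion instead of the whole archive.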

Expert Insights & Advanced Considerations

Anticipate Quantum Storage Integration

Emerging quantum storage technologies promise exponential increases in data density and security. Organizations investing in research and early adoption stand to gain a competitive edge by preparing infrastructure that can seamlessly incorporate quantum elements as standards mature.

Leverage Hybrid Storage Architectures

Combining traditional SSDs, NVMe drives, and DNA or optical storage creates a resilient, scalable environment. Such hybrid approaches help mitigate risks associated with new technologies and optimize performance across diverse workloads.

Prioritize Thermal Management Innovations

Advanced cooling solutions like liquid immersion and microfluidic heatsinks are critical for sustaining maximum throughput in dense storage arrays, especially as PCIe standards push transfer speeds beyond current thermal limits.

Implement Adaptive Tiered Systems

Using machine learning to dynamically allocate data across multiple storage tiers enhances access speed and prolongs hardware lifespan, ensuring that high-performance resources are reserved for critical data while archival information resides on durable media.

Develop Standards for Next-Gen Interfaces

Active participation in industry standardization efforts for PCIe 7.0, optical, and DNA storage protocols facilitates interoperability and accelerates widespread adoption of innovative storage solutions.

The Final Perspective from Industry Authorities

In the realm of high-speed storage, staying ahead demands both foresight and agility. Experts agree that embracing hybrid architectures, investing in thermal innovations, and monitoring breakthroughs like quantum and DNA storage are vital. The landscape is shifting rapidly; those who act now position themselves at the forefront of data resilience, speed, and capacity. For organizations poised to capitalize on these advancements, continuous learning and strategic experimentation are non-negotiable. If you’re eager to deepen your knowledge or explore tailored solutions, our team of specialists is ready to assist—reach out through our contact page. Stay committed to pushing the boundaries of storage excellence, and your data infrastructure will remain robust amid the technological tides ahead.

![3 External SSDs That Sustain 160Gbps Without Throttling [2026]](https://storage.workstationwizard.com/wp-content/uploads/2026/03/3-External-SSDs-That-Sustain-160Gbps-Without-Throttling-2026.jpeg)

This detailed overview really underscores how critical thermal management will be as storage speeds continue to escalate with PCIe 7.0 and beyond. I’ve seen firsthand how inadequate heatsinks or poor airflow can throttle performance significantly, especially in dense RAID configurations. The mention of microfluidic cooling and liquid immersion is particularly exciting because these methods seem to offer scalable solutions for maintaining peak speeds in data centers. I wonder, though, how feasible the widespread adoption of such cooling techniques will be in existing infrastructure. Has anyone experimented with integrating these advanced cooling solutions in retrofit scenarios? Also, with the rapid evolution of storage tech, what are realistic timelines for the industry to standardize and adopt these innovations broadly? It seems vital for organizations to start pilot projects now to stay prepared for the transition. Would love to hear thoughts on balancing investment in cooling infrastructure versus waiting for mature, enterprise-ready solutions.