Unveiling the Power of RAID Storage: The Backbone of Modern Data Centers

In an era where data is the new oil, understanding how RAID storage systems can enhance data reliability and speed is crucial for IT professionals and tech enthusiasts alike. RAID, or Redundant Array of Independent Disks, is not just a buzzword but a transformative technology that ensures your data remains accessible and protected, even in the face of hardware failures. As we approach 2025, the advancements in RAID configurations continue to redefine the standards of data storage solutions.

Decoding RAID: More Than Just Redundancy

What Exactly Is RAID, and How Does It Work?

RAID combines multiple physical disk drives into a single logical unit to improve performance, redundancy, or both. Depending on the configuration—such as RAID 0, RAID 1, RAID 5, or RAID 10—users can achieve different balances of speed and data protection. For example, RAID 0 stripes data across disks for maximum speed but offers no redundancy, while RAID 1 mirrors data for high fault tolerance. Expertly configured RAID arrays are the backbone of enterprise data centers, cloud storage providers, and high-performance workstations.
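To make the difference between striping and mirroring concrete, here is a minimal Python sketch. The function names, the 4-byte chunk size, and the in-memory "disks" are illustrative assumptions, not how any particular controller lays out data.

```python
def stripe(data: bytes, n_disks: int, chunk: int = 4) -> list[bytes]:
    """RAID 0 style: deal fixed-size chunks round-robin across the disks."""
    disks = [bytearray() for _ in range(n_disks)]
    for offset in range(0, len(data), chunk):
        disks[(offset // chunk) % n_disks] += data[offset:offset + chunk]
    return [bytes(d) for d in disks]


def mirror(data: bytes, n_disks: int) -> list[bytes]:
    """RAID 1 style: every disk stores a complete copy."""
    return [data] * n_disks


payload = b"ABCDEFGHIJKLMNOP"
striped = stripe(payload, n_disks=2)
mirrored = mirror(payload, n_disks=2)
print(striped)   # each disk holds half of the payload
print(mirrored)  # each disk holds all of it
```

Losing either striped disk leaves only half of the chunks, which is why RAID 0 has no fault tolerance, while either mirrored disk alone can still serve the full payload.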

The Evolution of RAID: From Simplicity to Sophistication

What Are the Latest Trends in RAID Technology for 2025?

Recent innovations have introduced hybrid RAID levels, software-defined RAID, and integration with NVMe SSDs to push the boundaries of speed and reliability. These advancements enable faster data access, lower latency, and improved fault tolerance. For instance, combining RAID 10 with NVMe SSDs can unlock unprecedented performance levels suitable for AI workloads and big data analytics. According to research from Gartner, the adoption of intelligent RAID configurations is set to grow exponentially in 2025, driven by the need for resilient and scalable storage architectures.

Optimizing Data Speed and Reliability: Practical Strategies

Implementing RAID effectively requires understanding your specific data needs. For mission-critical applications, RAID 6 or RAID 60 might be preferable because each parity group survives two simultaneous disk failures. Conversely, for high-speed data processing, RAID 10 might be more appropriate, with RAID 0 reserved for scratch data that can be regenerated, since it offers no redundancy. Additionally, regular monitoring and scheduled consistency checks (scrubs) are vital to detect silent data corruption early and ensure optimal performance. For insights into maximizing SSD speed, explore our guide on NVMe SSD Performance Secrets.
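When weighing levels against each other, it can help to put numbers on the trade-off. The sketch below is a rough calculator, assuming equal-size disks and two-way mirrors for RAID 10; `raid_summary` and its field names are made up for illustration.

```python
def raid_summary(level: str, disks: int, size_tb: float) -> dict:
    """Usable capacity and guaranteed failure tolerance for equal-size disks."""
    rules = {
        # level: (minimum disks, disks' worth of usable space, failures always survived)
        "0": (2, disks, 0),
        "1": (2, 1, disks - 1),
        "5": (3, disks - 1, 1),
        "6": (4, disks - 2, 2),
        "10": (4, disks // 2, 1),  # worst case: both disks of one mirror pair fail
    }
    if level not in rules:
        raise ValueError(f"unsupported RAID level: {level}")
    minimum, usable_disks, tolerated = rules[level]
    if disks < minimum or (level == "10" and disks % 2):
        raise ValueError(f"RAID {level} needs at least {minimum} disks (an even count for RAID 10)")
    return {"usable_tb": usable_disks * size_tb, "failures_tolerated": tolerated}


for level in ("0", "1", "5", "6", "10"):
    print(f"RAID {level}:", raid_summary(level, disks=4, size_tb=4.0))
```

With four 4 TB disks, the same hardware yields anywhere from 4 TB to 16 TB of usable space depending on the level, which is the capacity price paid for each step up in fault tolerance.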

Could RAID Storage Be Your Best Bet for Future-Proofing Data Infrastructure?

In an age where data loss can cost millions and downtime is unacceptable, RAID storage offers a compelling combination of speed and resilience. Its ability to adapt to evolving tech landscapes—like integration with cloud solutions and AI-driven management—makes it indispensable for future-proofing your data infrastructure. For a comprehensive comparison of storage options, see our article on SATA SSD vs NVMe SSD.

Interested in enhancing your creative workflow? Discover how scratch disk optimization can complement your RAID setup for even faster performance.

Want to delve deeper into the technical intricacies of RAID? Check out the detailed explanations provided by Seagate’s expert resources on RAID technology.

Beyond Traditional RAID: The Rise of Software-Defined Storage Solutions

While hardware-based RAID configurations have dominated enterprise storage for decades, the emergence of software-defined storage (SDS) is reshaping the landscape. SDS abstracts storage resources from hardware, enabling flexible, scalable, and cost-effective solutions that can dynamically adapt to workload demands. This approach allows organizations to implement complex RAID-like features using commodity hardware and advanced algorithms, often with better fault tolerance and performance management. Integrating SDS with NVMe SSDs, for example, can significantly reduce latency and increase throughput, offering a compelling alternative to traditional RAID configurations. For more insights into optimizing storage architectures, explore NVMe SSD Performance Secrets.

How Do You Balance Performance and Data Integrity in High-Stakes Environments?

Achieving the right balance between speed and reliability is a nuanced challenge faced by data center architects and enterprise IT managers. High-performance applications such as financial trading platforms or real-time analytics demand rapid data access, often favoring RAID 0 or RAID 10. However, the two carry very different risks: a single disk failure destroys a RAID 0 array, while RAID 10 survives any single failure and is lost only if both disks of one mirror pair fail. For redundant levels, hot spares, regular health monitoring, and predictive analytics shorten the window in which an array runs degraded, protecting data integrity without sacrificing speed. Moreover, advanced error correction techniques and regular backups provide protection that RAID alone cannot, such as recovery from accidental deletion. To deepen your understanding of storage reliability, check out SATA SSD vs NVMe SSD and learn how to optimize your setup for mission-critical applications.
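The risk of running a RAID 10 array in a degraded state can be estimated with simple counting. The sketch below assumes two-way mirrors and that the second failure is equally likely to hit any surviving disk, which is a simplification; the function name is hypothetical.

```python
from fractions import Fraction


def second_failure_fatal(disks: int) -> Fraction:
    """Chance that a second random disk failure hits the mirror partner
    of the first failed disk in a RAID 10 array of two-way mirrors."""
    if disks < 4 or disks % 2:
        raise ValueError("RAID 10 needs an even number of disks, at least 4")
    # Of the disks - 1 survivors, exactly one is the partner of the failed disk.
    return Fraction(1, disks - 1)


for n in (4, 8, 16):
    print(f"{n} disks: {second_failure_fatal(n)}")
```

Larger arrays spread the risk over more mirror pairs, but the exposure only disappears once the failed disk has been rebuilt, which is the case for hot spares.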

Could Emerging Technologies Redefine Our Approach to Data Redundancy?

Emerging technologies such as persistent memory and NVMe over Fabrics (NVMe-oF) are poised to change how we think about data redundancy and speed. Persistent memory pairs near-DRAM latency with the persistence of storage, so in-memory state can survive a restart and recovery after a failure is much faster. NVMe-oF extends the reach of high-speed NVMe SSDs over network fabrics, facilitating distributed storage architectures that can, for some workloads, take over the role of a local RAID array. These innovations offer not just incremental improvements but real shifts in data resilience strategies. As these technologies mature, they will likely become integral components of hybrid storage solutions that blend traditional RAID, software-defined storage, and cutting-edge hardware. For creative professionals or businesses seeking to accelerate workflows, consider our guide on scratch disk optimization.

What Are the Practical Implications of Integrating RAID with Cloud Storage?

Integrating RAID configurations with cloud storage solutions can provide a hybrid approach that enhances flexibility, scalability, and disaster recovery. Cloud providers often implement their own versions of redundancy, but combining local RAID arrays with cloud backups offers a layered defense against data loss. This setup enables real-time synchronization, off-site backups, and rapid recovery capabilities. Additionally, cloud-integrated RAID systems can dynamically allocate resources based on workload, optimizing performance and cost-efficiency. As more organizations adopt hybrid cloud strategies, understanding the interplay between on-premises RAID and cloud storage becomes paramount. For an in-depth comparison of storage architectures, revisit SATA SSD vs NVMe SSD.

Advanced RAID Configurations: Customizing Redundancy and Performance for Specialized Workloads

As data demands grow, traditional RAID levels sometimes fall short of providing the tailored solutions required by high-end enterprise environments. Enter nested RAID configurations such as RAID 50 and RAID 60, which stripe data (RAID 0) across several RAID 5 or RAID 6 groups, combining striping and parity rather than mirroring. RAID 50 tolerates one disk failure per group and RAID 60 tolerates two per group, so either can survive multiple failures as long as no single group exceeds its limit. These nested levels are particularly valuable in environments like video editing farms, scientific computing, and financial data processing, where downtime can translate into significant financial losses. Implementing these configurations demands a nuanced understanding of disk interdependencies, rebuild times, and optimal disk utilization strategies, often leveraging hardware accelerators and cache-intensive controllers to mitigate potential bottlenecks.
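For RAID 50 and RAID 60, capacity and fault tolerance depend on how the disks are divided into groups. The following sketch assumes equal-size disks and equal-size groups; `nested_parity` and its parameters are illustrative names.

```python
def nested_parity(groups: int, disks_per_group: int, parity_per_group: int, size_tb: float) -> dict:
    """Model RAID 50 (parity_per_group=1) or RAID 60 (parity_per_group=2)."""
    if groups < 2:
        raise ValueError("a nested array stripes across at least two groups")
    if disks_per_group < parity_per_group + 2:
        raise ValueError("each group needs at least two data disks plus its parity disks")
    data_disks = groups * (disks_per_group - parity_per_group)
    return {
        "usable_tb": data_disks * size_tb,
        # Worst case: every failure lands in the same group.
        "guaranteed_failures": parity_per_group,
        # Best case: failures are spread evenly across the groups.
        "best_case_failures": groups * parity_per_group,
    }


raid50 = nested_parity(groups=2, disks_per_group=4, parity_per_group=1, size_tb=4.0)
raid60 = nested_parity(groups=2, disks_per_group=6, parity_per_group=2, size_tb=4.0)
print("RAID 50:", raid50)
print("RAID 60:", raid60)
```

The gap between the guaranteed and best-case numbers is why group layout matters: more, smaller groups raise the best case and shorten rebuilds, but spend more disks on parity.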

How Can Businesses Optimize RAID Configurations for Mixed Workloads with Differing Latency and Throughput Requirements?

Optimizing RAID for diverse workloads involves a strategic blend of hardware selection, configuration tuning, and ongoing management. For latency-sensitive applications such as real-time analytics, deploying NVMe-based RAID arrays with low-latency controllers ensures rapid data access. Conversely, bulk storage for archival purposes might leverage higher-capacity SATA drives with RAID 6 or RAID 60 for durability. Layered caching mechanisms, such as SSD caches in hybrid arrays, can bridge the gap, accelerating read/write operations without compromising redundancy. Additionally, integrating software-defined storage solutions enables dynamic reconfiguration, adapting to workload shifts in real-time, thus ensuring both performance and resilience are maintained at optimal levels.

The Interplay of RAID and Emerging Storage Technologies: A Deep Dive into Hybrid Architectures

Emerging storage paradigms are increasingly integrating with traditional RAID to craft hybrid architectures that leverage the strengths of multiple technologies. Persistent memory modules, such as Intel Optane, are now being paired with RAID arrays to create tiered storage solutions that combine ultra-fast access with large capacity. This synergy reduces latency bottlenecks and enhances overall throughput, especially for transactional systems. Similarly, NVMe over Fabrics (NVMe-oF) facilitates distributed RAID-like configurations across networked storage nodes, enabling scalable, fault-tolerant architectures that transcend physical limitations of local disks. These hybrid solutions demand sophisticated orchestration layers, often managed via software-defined storage platforms, which intelligently allocate data across tiers based on access patterns, workload criticality, and cost considerations. Such innovations are setting the stage for next-generation data centers where flexibility, speed, and resilience are seamlessly integrated.

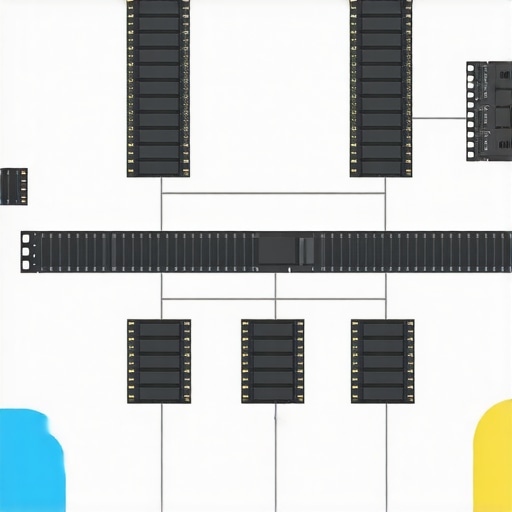

Illustration of hybrid storage architecture integrating NVMe SSDs, persistent memory, and traditional RAID arrays for optimized performance and resilience.
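The idea of allocating data across tiers based on access patterns can be sketched as a simple ranking policy. This is a toy model under stated assumptions: the block names, access counts, and the `place_blocks` function are invented for illustration, and real tiering engines also weigh recency, block size, and cost.

```python
def place_blocks(access_counts: dict[str, int], fast_slots: int) -> dict[str, str]:
    """Put the most frequently accessed blocks on the fast tier, the rest on the capacity tier."""
    # Sort by descending access count; ties are broken by name for determinism.
    ranked = sorted(access_counts, key=lambda block: (-access_counts[block], block))
    return {
        block: ("fast" if rank < fast_slots else "capacity")
        for rank, block in enumerate(ranked)
    }


counts = {"db-index": 950, "db-log": 400, "video-archive": 3, "backups": 1}
placement = place_blocks(counts, fast_slots=2)
for block, tier in placement.items():
    print(f"{block}: {tier}")
```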

Optimizing RAID for Cloud-Integrated Storage Ecosystems: Strategies for Hybrid Environments

As organizations increasingly adopt hybrid cloud models, the challenge becomes harmonizing on-premises RAID configurations with cloud-based storage solutions. Effective strategies include implementing cloud-aware RAID architectures, where local arrays are synchronized with off-site backups via automated replication tools. This approach ensures data consistency, rapid recovery, and minimal downtime in disaster scenarios. Moreover, leveraging cloud-native storage services with integrated redundancy complements on-premises RAID, providing a layered defense against data loss. Advanced orchestration tools enable dynamic scaling of storage pools, allowing seamless expansion or reconfiguration without service interruption. For enterprise-grade solutions, understanding the nuances of latency, bandwidth, and security considerations is critical when designing such hybrid environments—an area ripe for further exploration in our upcoming detailed guide.
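One building block of automated replication is deciding which local files actually need to be sent off-site. A minimal sketch, assuming the off-site copy exposes a manifest of SHA-256 checksums (the manifest format, file names, and `plan_replication` are hypothetical):

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def plan_replication(local_files: dict[str, bytes], remote_manifest: dict[str, str]) -> list[str]:
    """Return names of local files that are missing off-site or whose checksum differs."""
    return sorted(
        name
        for name, content in local_files.items()
        if remote_manifest.get(name) != sha256_hex(content)
    )


local = {"a.db": b"v2", "b.log": b"same", "c.cfg": b"new"}
remote = {"a.db": sha256_hex(b"v1"), "b.log": sha256_hex(b"same")}
to_send = plan_replication(local, remote)
print(to_send)  # ['a.db', 'c.cfg']
```

Comparing checksums rather than timestamps also catches files whose contents changed silently, which ties replication back to the data consistency goal.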

Revolutionizing Data Redundancy with Parity Algorithms: Beyond Basic RAID

Parity schemes range from simple XOR, which RAID 5 uses to survive one disk failure, to Reed-Solomon codes, which RAID 6 and erasure-coded systems use to survive two or more. A single XOR parity block can rebuild only one missing block per stripe; Reed-Solomon adds further independent parity blocks computed with finite field arithmetic, so data can be reconstructed after multiple disk failures at the cost of extra computation and capacity. Implementing these techniques requires a working understanding of finite field mathematics and error-correcting codes, and is often done through hardware accelerators or optimized software layers. As data integrity becomes paramount in high-stakes environments like financial services and scientific research, choosing the right parity scheme offers a strategic edge in designing resilient storage architectures.
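To show what the finite field mathematics looks like in practice, here is a small sketch of dual parity in the style commonly described for RAID 6: a plain XOR parity P plus a second parity Q computed in GF(2^8). The field polynomial 0x11d and generator 2 are a conventional choice assumed here, and all function names are illustrative; production implementations use lookup tables or SIMD rather than loops like these.

```python
def gf_mul(a: int, b: int) -> int:
    """Multiply two bytes in GF(2^8) modulo x^8 + x^4 + x^3 + x^2 + 1 (0x11d)."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        if a & 0x100:
            a ^= 0x11D
        b >>= 1
    return result


def gf_pow(a: int, n: int) -> int:
    result = 1
    for _ in range(n):
        result = gf_mul(result, a)
    return result


def gf_inv(a: int) -> int:
    """Inverse of a nonzero element: a^254, because a^255 == 1."""
    return gf_pow(a, 254)


def pq_parity(data: list[bytes]) -> tuple[bytes, bytes]:
    """P is the XOR of all blocks; Q weights block i by 2^i before XOR-ing."""
    p, q = bytearray(len(data[0])), bytearray(len(data[0]))
    for i, block in enumerate(data):
        coeff = gf_pow(2, i)
        for j, byte in enumerate(block):
            p[j] ^= byte
            q[j] ^= gf_mul(coeff, byte)
    return bytes(p), bytes(q)


def recover_two(data: list, x: int, y: int, p: bytes, q: bytes) -> tuple[bytes, bytes]:
    """Rebuild data blocks x and y (entries at those indexes are ignored)."""
    pxy, qxy = bytearray(p), bytearray(q)
    for i, block in enumerate(data):
        if i in (x, y):
            continue
        coeff = gf_pow(2, i)
        for j, byte in enumerate(block):
            pxy[j] ^= byte
            qxy[j] ^= gf_mul(coeff, byte)
    # Now pxy = Dx ^ Dy and qxy = 2^x*Dx ^ 2^y*Dy; solve the two equations for Dx.
    gx, gy = gf_pow(2, x), gf_pow(2, y)
    denom_inv = gf_inv(gx ^ gy)
    dx = bytes(gf_mul(denom_inv, qxy[j] ^ gf_mul(gy, pxy[j])) for j in range(len(p)))
    dy = bytes(pxy[j] ^ dx[j] for j in range(len(p)))
    return dx, dy


blocks = [b"RAID", b"six ", b"demo"]
p, q = pq_parity(blocks)
rebuilt = recover_two([None, blocks[1], None], 0, 2, p, q)
print(rebuilt)  # the two lost blocks, recovered from one survivor plus P and Q
```

With only P available, the same stripe could recover a single lost block by XOR-ing the survivors with P; it is the second, independently weighted parity Q that makes a two-block recovery solvable.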

How Can Machine Learning Optimize RAID Array Management and Predict Failures?

Machine learning algorithms are transforming RAID management by enabling predictive analytics for disk health monitoring. By analyzing SMART attributes, temperature logs, and performance metrics, these models can forecast imminent disk failures, allowing preemptive replacements and reducing unplanned downtime. Implementing such systems involves training models on extensive historical data, integrating them with storage management software, and establishing automated response protocols. This proactive approach not only enhances reliability but also optimizes maintenance schedules and extends the lifespan of storage arrays. For organizations seeking to elevate their data resilience strategies, embracing AI-driven diagnostics is an essential step toward intelligent storage management.
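As a very small illustration of the training step, the sketch below fits a logistic regression to a handful of SMART-style readings using plain gradient descent. Everything here is an assumption for demonstration: the eight samples are made-up toy data, the two features and their scaling constants are arbitrary, and a real deployment would train on large fleets of disks with a proper ML library and validation.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def scale(reallocated: int, pending: int) -> tuple[float, float]:
    # Hypothetical scaling constants that keep both features in a similar range.
    return reallocated / 100.0, pending / 10.0


def train(samples, labels, epochs: int = 2000, lr: float = 0.5):
    """Fit logistic regression weights with batch gradient descent."""
    w, b, n = [0.0, 0.0], 0.0, len(samples)
    for _ in range(epochs):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for (x1, x2), y in zip(samples, labels):
            error = sigmoid(w[0] * x1 + w[1] * x2 + b) - y
            grad_w[0] += error * x1
            grad_w[1] += error * x2
            grad_b += error
        w = [w[0] - lr * grad_w[0] / n, w[1] - lr * grad_w[1] / n]
        b -= lr * grad_b / n
    return w, b


def failure_risk(model, reallocated: int, pending: int) -> float:
    w, b = model
    x1, x2 = scale(reallocated, pending)
    return sigmoid(w[0] * x1 + w[1] * x2 + b)


# Made-up toy history: (reallocated sectors, pending sectors) -> 1 if the disk later failed.
history = [
    ((0, 0), 0), ((1, 0), 0), ((0, 1), 0), ((2, 0), 0),
    ((30, 5), 1), ((50, 12), 1), ((20, 8), 1), ((80, 3), 1),
]
samples = [scale(*raw) for raw, _ in history]
labels = [label for _, label in history]
model = train(samples, labels)

healthy_risk = failure_risk(model, reallocated=0, pending=0)
worn_risk = failure_risk(model, reallocated=60, pending=10)
print(f"healthy disk risk: {healthy_risk:.3f}, worn disk risk: {worn_risk:.3f}")
```

An automated response protocol would then compare such a risk score against a threshold chosen from validation data and, for example, trigger a rebuild onto a hot spare before the disk fails outright.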

What Are the Emerging Paradigms in Software-Defined Storage Architectures?

Software-defined storage (SDS) is rapidly evolving, offering unprecedented flexibility and scalability by decoupling storage management from hardware constraints. Modern SDS platforms leverage distributed object storage, containerization, and virtualization to create adaptable, multi-tenant environments capable of implementing complex RAID-like features dynamically. These systems facilitate policy-driven data placement, automated tiering, and real-time reconfiguration, often utilizing commodity hardware or cloud resources. Integrating SDS with persistent memory and NVMe-over-Fabrics further accelerates performance, enabling near-instantaneous data access and seamless scalability across geographically dispersed data centers. As organizations seek agile and cost-effective storage solutions, mastering SDS architectures is vital for future-proof data infrastructure design.

How Do Emerging Technologies Influence Data Resilience Strategies in Hybrid Cloud Environments?

Emerging storage technologies, including persistent memory, NVMe-oF, and hyper-converged infrastructure, are reshaping resilience strategies in hybrid cloud ecosystems. Persistent memory offers ultra-low latency and durability, allowing critical data to be stored close to processing units, reducing dependency on traditional RAID. NVMe-over-Fabrics extends high-speed access over networks, enabling distributed RAID-like redundancy across data centers. Hyper-converged systems integrate compute, storage, and networking, simplifying management and improving fault tolerance at scale. These innovations facilitate robust disaster recovery models, seamless workload mobility, and dynamic resource allocation, essential for maintaining data integrity and availability in complex hybrid environments. For organizations navigating this landscape, strategic deployment of these technologies can significantly enhance overall resilience and performance.

What Role Do Hardware Accelerators Play in Enhancing RAID Performance?

Hardware accelerators, such as FPGAs and specialized RAID controllers with built-in processing cores, are instrumental in offloading complex parity calculations and error correction tasks. By executing these computationally intensive operations in dedicated hardware, systems can achieve higher throughput and lower latency, especially when handling large-scale, high-transaction workloads. This acceleration is particularly beneficial in NVMe-based RAID arrays and enterprise storage solutions where performance bottlenecks impede operational efficiency. Integrating hardware accelerators requires careful system design to optimize data paths and ensure compatibility with existing infrastructure. As storage demands escalate, leveraging these accelerators becomes a strategic priority for data centers aiming to maximize performance without compromising fault tolerance.

How Can You Leverage Cloud-Native Storage Solutions for Enhanced Data Redundancy?

Cloud-native storage solutions, such as object storage with erasure coding and geo-replication, complement traditional RAID by offering flexible, scalable redundancy across distributed environments. These solutions utilize advanced algorithms to split data into fragments, distribute them across multiple nodes, and reconstruct data even in the event of multiple failures. Integrating these with on-premises RAID arrays creates a hybrid resilience model, enabling rapid recovery, disaster mitigation, and seamless data mobility. Cloud-native tools also facilitate policy-driven automation, dynamic provisioning, and cost-effective scaling, making them indispensable in modern data architectures. Organizations seeking to future-proof their data resilience should explore how hybrid approaches leveraging both RAID and cloud-native solutions can deliver optimal results.
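The trade-off between erasure coding and plain replication comes down to simple arithmetic. The sketch below assumes a code in which any `data_fragments` of the stored fragments are enough to rebuild the object (as with Reed-Solomon); the 10+4 layout and the function names are illustrative, not a recommendation from any provider.

```python
def erasure_profile(data_fragments: int, parity_fragments: int) -> dict:
    """Storage cost and fault tolerance of a k + m erasure-coded layout."""
    total = data_fragments + parity_fragments
    return {
        "storage_overhead": total / data_fragments,  # raw bytes stored per byte of data
        "failures_tolerated": parity_fragments,
    }


def replication_profile(copies: int) -> dict:
    """Storage cost and fault tolerance of keeping full copies."""
    return {"storage_overhead": float(copies), "failures_tolerated": copies - 1}


ec = erasure_profile(data_fragments=10, parity_fragments=4)
rep = replication_profile(copies=3)
print("10+4 erasure coding:", ec)
print("3x replication:     ", rep)
```

In this example the erasure-coded layout survives more simultaneous losses while storing less than half the raw data of triple replication, at the price of more computation and network traffic during reconstruction.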

Frequently Asked Questions (FAQ)

What are the main types of RAID configurations and their primary use cases?

RAID configurations include RAID 0, RAID 1, RAID 5, RAID 6, RAID 10, and more complex levels like RAID 50 and RAID 60. RAID 0 offers enhanced performance through striping but no redundancy, making it suitable for non-critical high-speed tasks. RAID 1 mirrors data for fault tolerance, ideal for critical data. RAID 5 and 6 provide a balance of redundancy and storage efficiency using parity, suitable for enterprise environments. RAID 10 combines mirroring and striping for high performance and reliability, perfect for transactional systems requiring speed and data integrity.

How does software-defined RAID differ from traditional hardware RAID?

Software-defined RAID moves RAID management from dedicated controllers into software layers such as the operating system or a storage platform. This approach offers greater flexibility, scalability, and cost-effectiveness, enabling dynamic reconfiguration and integration with modern storage architectures like SDS (Software-Defined Storage). Hardware RAID uses dedicated controllers, which offload parity work from the CPU and often include battery- or flash-backed write cache, but offer less flexibility and can tie the array to a particular controller family. The choice depends on workload needs, scalability requirements, and budget.

What emerging technologies are influencing the future of RAID and data redundancy?

Emerging technologies such as persistent memory (e.g., Intel Optane), NVMe over Fabrics (NVMe-oF), and machine learning-driven predictive analytics are revolutionizing data redundancy and performance. Persistent memory offers near-instant data recovery, while NVMe-oF enables distributed, high-speed storage across networks. Machine learning models predict disk failures, allowing preemptive maintenance. These innovations are pushing the boundaries of traditional RAID, leading toward hybrid architectures that combine hardware, software, and emerging memory technologies for superior resilience and speed.

What are the best practices for maintaining RAID arrays to ensure data integrity?

Regular monitoring of disk health via SMART metrics, implementing hot spares, and routine testing are essential. Keeping firmware and drivers updated ensures compatibility and reliability. Using redundant power supplies and environmental controls minimizes physical risks. Periodic scrubs (consistency checks that read every stripe and verify its parity or mirror copy) detect silent data corruption before a rebuild has to depend on the affected blocks. Integrating monitoring tools with predictive analytics can preempt failures. Backups should complement RAID to safeguard against catastrophic failures. These practices collectively sustain optimal RAID performance and data integrity.
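A consistency check of the kind described above can be sketched in a few lines. This toy version assumes single XOR parity and keeps stripes in memory as `(data_blocks, stored_parity)` pairs; the names are illustrative, and real arrays run this inside the controller or the operating system's RAID layer.

```python
from functools import reduce


def xor_parity(blocks: list[bytes]) -> bytes:
    """XOR equal-length blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def scrub(stripes: list[tuple[list[bytes], bytes]]) -> list[int]:
    """Return the indexes of stripes whose stored parity no longer matches their data."""
    return [
        index
        for index, (data_blocks, stored_parity) in enumerate(stripes)
        if xor_parity(data_blocks) != stored_parity
    ]


good = [b"\x01\x02", b"\x0f\xf0"]
stripes = [
    (good, xor_parity(good)),                        # consistent stripe
    ([b"\x01\x03", b"\x0f\xf0"], xor_parity(good)),  # one flipped bit in the data
]
bad_stripes = scrub(stripes)
print(bad_stripes)  # [1]
```

Note that a parity mismatch only says that something in the stripe is wrong, not which block; pinpointing the bad block needs extra information such as per-block checksums.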

Can RAID be effectively integrated with cloud storage solutions?

Yes, hybrid architectures combining on-premises RAID arrays with cloud storage offer enhanced resilience, scalability, and disaster recovery. Local RAID provides fast access and redundancy, while cloud backups and replication ensure off-site protection. Cloud-native storage services with erasure coding and geo-replication further strengthen data resilience. This integration enables seamless data mobility, rapid recovery, and cost-effective growth. Proper synchronization, security, and latency management are crucial for effective hybrid systems. This approach leverages the strengths of both local and cloud storage for comprehensive data protection.

What role does machine learning play in optimizing RAID management?

Machine learning enhances RAID management by predicting disk failures through analysis of SMART data, temperature, and performance logs. These models enable proactive replacements, reducing downtime and data loss risk. ML-driven analytics help optimize rebuild strategies and resource allocation. Integrating AI tools into storage management systems provides continuous health assessment, anomaly detection, and automated responses. This predictive approach improves reliability, extends hardware lifespan, and reduces operational costs, making RAID management more intelligent and resilient.

How do advanced parity algorithms improve RAID fault tolerance?

Parity algorithms differ in how many failures they can repair. Single XOR parity, as in RAID 5, rebuilds one failed disk per stripe, while Reed-Solomon codes, as in RAID 6 and erasure-coded systems, add independent parity blocks so that data can be recovered from two or more failures. The cost is extra capacity and more computation per write, which hardware accelerators and optimized software implementations help keep manageable. These codes are vital for high-demand environments like scientific computing and financial services, where data resilience is critical, and they extend traditional RAID with higher fault tolerance and improved data protection.

What is the impact of emerging storage paradigms like persistent memory on traditional RAID strategies?

Persistent memory blurs the line between volatile RAM and persistent storage, enabling near-instantaneous data recovery and reducing reliance on traditional RAID for certain applications. It facilitates ultra-low latency tiered storage, complementing or replacing some RAID functions. NVMe over Fabrics allows distributed, high-speed access across networks, creating alternative redundancy models. These technologies enable hybrid architectures that combine persistent memory, NVMe-oF, and traditional RAID for optimized performance and resilience, especially in real-time analytics and transactional systems.

What is the significance of hardware accelerators in modern RAID systems?

Hardware accelerators like FPGAs and dedicated RAID controllers offload intensive parity calculations and error correction tasks, significantly boosting throughput and reducing latency. They are particularly beneficial in large-scale, high-transaction environments such as data centers and enterprise storage. By handling complex computations in dedicated hardware, they free CPU resources and improve overall system performance. Integrating these accelerators requires careful system design but yields substantial gains in speed and fault tolerance, fulfilling the demands of modern data-intensive workloads.

How can organizations optimize RAID configurations for mixed workloads with varying latency and throughput needs?

Optimization involves selecting appropriate RAID levels for specific workloads—using NVMe RAID arrays for latency-sensitive applications and higher-capacity SATA RAID for archival storage. Hybrid caching solutions, such as SSD caches in larger arrays, can accelerate access for critical data. Dynamic reconfiguration via software-defined storage allows real-time adaptation to workload shifts. Combining multiple RAID levels or employing tiered storage strategies ensures performance and resilience are balanced according to operational requirements.

Trusted External Sources

- Gartner Research: Provides insights on storage trends, including hybrid RAID architectures and emerging technologies, useful for understanding industry directions and forecasts.

- Seagate Technical Resources: Offers detailed technical explanations of RAID implementations, error correction algorithms, and storage management best practices, valuable for deep technical understanding.

- IEEE Transactions on Computers: Publishes scholarly articles on error correction algorithms, fault tolerance, and storage system innovations, ensuring access to cutting-edge research.

- Intel Optane Documentation: Details on persistent memory technology, outlining how it integrates with storage architectures and impacts data resilience strategies.

- National Institute of Standards and Technology (NIST): Provides standards and guidelines on data integrity, error correction, and storage security, ensuring compliance and best practices.

Conclusion: Final Expert Takeaway

Understanding RAID storage’s evolving landscape is essential for designing resilient, high-performance data infrastructures capable of meeting modern demands. From traditional configurations to innovative hybrid architectures incorporating emerging technologies like persistent memory and NVMe-oF, the key lies in strategic selection, continuous monitoring, and leveraging intelligent management tools. As data volumes grow exponentially, future-proofing your storage systems through a combination of robust RAID setups, software-defined solutions, and emerging hardware accelerators will be critical. Embracing these advancements ensures your data remains accessible, protected, and primed for innovation.