Mastering High-Performance Storage Architectures for Critical Data Workflows

The rapidly evolving landscape of storage technology demands a solid understanding of the principles governing data reliability, speed, and scalability. As professionals increasingly rely on NVMe SSDs, particularly in RAID 0 configurations, it’s essential to understand what leads to system crashes and data integrity issues. This article explores how NVMe technology, RAID configurations, and scratch disk optimization interact in high-demand environments.

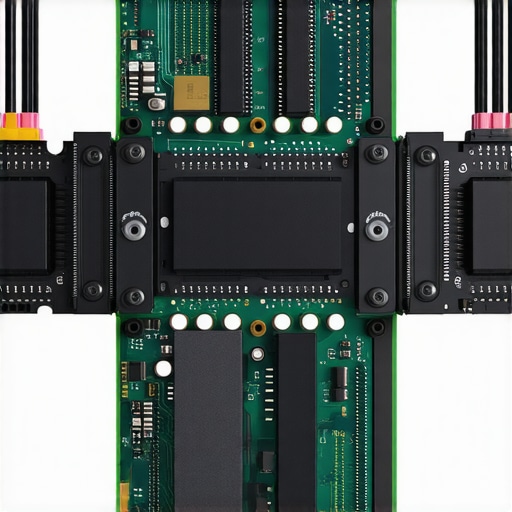

Deciphering the Risks of RAID 0 with NVMe SSDs in Demanding Environments

While RAID 0 delivers high throughput by striping data across multiple NVMe drives, it provides no redundancy: if any single member drive fails, the data on the entire array is lost. Every drive added to the stripe raises the odds that one of them fails, and NVMe's speed makes large stripes tempting without doing anything to offset that risk. In professional contexts such as real-time data analytics or 4K video editing, this vulnerability can cause system crashes and lost work in the middle of a project.
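To put the risk in numbers: under the simplifying assumption that drives fail independently, each with the same probability $p$ over some period, a RAID 0 array of $n$ drives is lost whenever at least one member fails. The value $p = 0.02$ below is purely illustrative, not a measured failure rate.

$$P_{\text{array loss}} = 1 - (1 - p)^{n}$$

$$p = 0.02,\; n = 4:\qquad P_{\text{array loss}} = 1 - 0.98^{4} \approx 1 - 0.9224 = 0.0776$$

Under these assumptions, a four-drive stripe is almost four times as likely to be lost as any single drive on its own.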

How Firmware and Driver Optimization Mitigate RAID Crashes

Current firmware and stable drivers are critical for keeping NVMe controllers and the host system in sync. Proper configuration, including choosing enterprise-grade NVMe SSDs with mature firmware, reduces spurious drive resets and command timeouts, which are a common reason for a drive dropping out of an array. Features such as end-to-end data protection, which checks data integrity along the path between host and flash, and well-implemented command queuing, which keeps the controller responsive under heavy parallel load, further harden the system against crashes.
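On Linux, a first step in firmware management is simply knowing what each drive is running. The sketch below assumes the kernel NVMe driver's sysfs layout, where each controller under /sys/class/nvme exposes plain-text model and firmware_rev attributes. The function name nvme_firmware_inventory is hypothetical, and the output is meant to be compared by hand against your vendor's release notes.

```python
from pathlib import Path


def nvme_firmware_inventory(sysfs_root: str = "/sys/class/nvme") -> dict[str, dict[str, str]]:
    """Return {controller: {"model": ..., "firmware_rev": ...}} read from sysfs.

    Assumes the Linux NVMe driver's sysfs layout, where each controller
    directory (nvme0, nvme1, ...) exposes plain-text "model" and
    "firmware_rev" attributes. Unreadable attributes are reported as "unknown".
    """
    inventory: dict[str, dict[str, str]] = {}
    root = Path(sysfs_root)
    if not root.is_dir():
        return inventory  # not Linux, or no NVMe driver loaded
    for ctrl in sorted(root.iterdir()):
        info: dict[str, str] = {}
        for attr in ("model", "firmware_rev"):
            try:
                info[attr] = (ctrl / attr).read_text().strip()
            except OSError:
                info[attr] = "unknown"
        inventory[ctrl.name] = info
    return inventory


if __name__ == "__main__":
    for name, info in nvme_firmware_inventory().items():
        print(f"{name}: {info['model']} firmware {info['firmware_rev']}")
```

Taking the sysfs root as a parameter keeps the function testable against a fake directory tree and lets it return an empty result on machines without NVMe devices.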

Is Your Scratch Disk Setup Contributing to Stability Issues?

Scratch disks serve as vital temporary storage for creative applications, but a poorly configured scratch disk can introduce instability into a RAID array. Placing scratch data on a dedicated external SSD or SATA SSD, and choosing a suitable RAID level for the main array (such as RAID 10 for balanced speed and redundancy), can significantly improve system stability. Consult our scratch disk optimization guide for detailed strategies.
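A quick sanity check for this advice is to confirm that the scratch location is not on the same filesystem as your project files and that it has enough free space. This is a minimal sketch with a hypothetical function name and threshold. Note that st_dev identifies a filesystem, not a physical drive, so two partitions on the same SSD would still pass the first check.

```python
import os
import shutil


def check_scratch_volume(scratch_dir: str, project_dir: str, min_free_gib: float) -> list[str]:
    """Return warnings about a proposed scratch-disk location (empty list = OK).

    1. Compares st_dev to see whether scratch and project data share a filesystem.
    2. Checks that the scratch filesystem has at least min_free_gib free.
    """
    warnings = []
    if os.stat(scratch_dir).st_dev == os.stat(project_dir).st_dev:
        warnings.append("scratch shares a device with the project volume")
    free_gib = shutil.disk_usage(scratch_dir).free / 2**30
    if free_gib < min_free_gib:
        warnings.append(f"only {free_gib:.1f} GiB free on scratch volume")
    return warnings
```

For example, `check_scratch_volume("/mnt/scratch", "/mnt/projects", min_free_gib=200)` (hypothetical paths) returns an empty list when both checks pass.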

What are the primary causes of NVMe RAID crashes during intensive write operations?

Common culprits include firmware incompatibilities, unstable power delivery, outdated drivers, and improper RAID controller settings. Sustained high-speed transfers push drives toward their thermal limits and controllers toward their throughput ceilings, which is why diligent system monitoring and regular firmware updates matter. Academic publications on SSD firmware resilience offer deeper insight into these failure modes.
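Because heat is one of these culprits, it helps to log drive temperatures while reproducing a crash. On Linux, the NVMe driver registers a hwmon sensor whose name file reads nvme, and by hwmon sysfs convention temp1_input holds the temperature in millidegrees Celsius. The function names and the 70 °C limit in the usage line are assumptions for illustration; use the warning temperature from your drive's datasheet.

```python
from pathlib import Path


def read_nvme_temperatures(hwmon_root: str = "/sys/class/hwmon") -> dict[str, float]:
    """Return {hwmon_dir_name: temperature_celsius} for NVMe sensors.

    Assumes the Linux hwmon sysfs convention: each hwmonN directory has a
    "name" file ("nvme" for NVMe drives) and temp1_input in millidegrees C.
    """
    temps: dict[str, float] = {}
    root = Path(hwmon_root)
    if not root.is_dir():
        return temps
    for hw in sorted(root.iterdir()):
        try:
            if (hw / "name").read_text().strip() != "nvme":
                continue  # some other sensor (CPU, chipset, ...)
            millideg = int((hw / "temp1_input").read_text().strip())
        except (OSError, ValueError):
            continue  # sensor missing or unreadable; skip it
        temps[hw.name] = millideg / 1000.0
    return temps


def over_threshold(temps: dict[str, float], limit_c: float) -> list[str]:
    """Names of sensors at or above limit_c (a hypothetical alert threshold)."""
    return [name for name, t in temps.items() if t >= limit_c]
```

Polled in a loop during a long write, `over_threshold(read_nvme_temperatures(), limit_c=70.0)` shows whether a crash coincides with a drive running hot.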

Incorporating enterprise-grade hardware, maintaining meticulous firmware management, and designing optimized scratch disk workflows are fundamental to preventing system crashes. Engage with professional communities or consult specialized documentation to tailor configurations for your specific operational demands.

Explore more advanced topics or share your experiences with NVMe RAID implementations by visiting our contact page.

Transforming Storage Speed into Reliability

While the pursuit of blistering transfer rates drives many toward NVMe SSDs in RAID arrays, preserving data integrity at that level of performance remains a pivotal challenge for professionals. Balancing speed and redundancy requires not just careful hardware selection but also a configuration strategy that anticipates potential pitfalls.

The Myth of Maxed-Out Speed and Overlooked Risks

It’s tempting to believe that stacking multiple NVMe drives in RAID 0 will deliver unrivaled performance for intensive workflows such as 8K video editing or large-scale data analysis. However, that belief often underestimates factors such as firmware stability, motherboard compatibility, and power management. Overlooking them can lead to unpredictable crashes, corrupted files, or silent data loss, especially during long sustained transfers.

Layering Resilience into Lightning-Fast Storage

One effective approach is a RAID level that prioritizes redundancy, such as RAID 10, which stripes data across mirrored pairs and so trades half the raw capacity for the ability to survive a drive failure while keeping much of the benefit of striping. Pairing it with enterprise-grade NVMe SSDs whose firmware supports end-to-end data protection further reduces the likelihood of crashes. Because RAID is not a backup, a robust backup strategy is still what anchors high-performance storage in a foundation of reliability. For comprehensive guidance, visit our RAID storage overview.
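The redundancy trade-off can be quantified if drives are assumed to fail independently. This is a simplification, since drives from one batch or behind one power supply often fail together. In the sketch below, RAID 10 is modeled as n/2 two-way mirrors that are lost only if both drives in some pair fail during the period, with no rebuild in between. The function names are illustrative.

```python
def raid0_loss_probability(p: float, n: int) -> float:
    """RAID 0 is lost if any of its n striped drives fails."""
    return 1 - (1 - p) ** n


def raid10_loss_probability(p: float, n: int) -> float:
    """RAID 10 is lost only if both drives of some mirror pair fail.

    Assumes n is even, drives form n/2 two-way mirrors, failures are
    independent, and no rebuild completes during the period.
    """
    if n % 2:
        raise ValueError("RAID 10 needs an even number of drives")
    return 1 - (1 - p ** 2) ** (n // 2)


def usable_capacity_tb(drive_tb: float, n: int, level: str) -> float:
    """RAID 0 exposes every drive's capacity; RAID 10 exposes half."""
    if level == "raid0":
        return drive_tb * n
    if level == "raid10":
        return drive_tb * n / 2
    raise ValueError(f"unsupported level: {level}")


if __name__ == "__main__":
    p, n = 0.02, 4  # illustrative per-drive failure probability, drive count
    print(f"RAID 0:  {raid0_loss_probability(p, n):.4f}, {usable_capacity_tb(2, n, 'raid0')} TB")
    print(f"RAID 10: {raid10_loss_probability(p, n):.4f}, {usable_capacity_tb(2, n, 'raid10')} TB")
```

With an assumed per-drive failure probability of 0.02 and four drives, RAID 0 loses the array with probability of about 0.078, while RAID 10 comes in around 0.0008, at the cost of half the usable capacity. Correlated failures erode that advantage, which is one more reason the backup strategy still matters.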

Implementing Proactive Monitoring for Peace of Mind

Monitoring tools that track drive temperature, error logs, and controller health are vital for preempting storage failures. Building these diagnostics into your storage environment lets you intervene before a failing drive takes down the array. Many enterprise monitoring tools can read the health data that drives report through their firmware, such as NVMe SMART logs, and alert administrators automatically, keeping downtime to a minimum.
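Most of these signals come from the NVMe SMART / Health Information log, which records a critical-warning bitfield, the composite temperature in kelvin, available spare capacity, the percentage of rated endurance used, and media error counts. The sketch below turns one snapshot of that log into alerts. The dictionary keys mirror nvme-cli's JSON smart-log output, which is an assumption that may not hold for every nvme-cli version, and the thresholds are hypothetical defaults.

```python
def smart_alerts(log: dict, max_temp_c: float = 70.0, max_pct_used: int = 90) -> list[str]:
    """Turn one NVMe SMART/health snapshot into human-readable alerts.

    Key names are assumed to follow nvme-cli's JSON smart-log output.
    Temperature is assumed to be in kelvin, as in the NVMe log page.
    """
    alerts = []
    if log.get("critical_warning", 0) != 0:
        alerts.append(f"critical warning bits set: {log['critical_warning']:#04x}")
    temp_c = log.get("temperature", 0) - 273  # kelvin -> Celsius
    if temp_c >= max_temp_c:
        alerts.append(f"temperature {temp_c} C at or above {max_temp_c} C")
    if log.get("avail_spare", 100) <= log.get("spare_thresh", 0):
        alerts.append("available spare at or below threshold")
    if log.get("percent_used", 0) >= max_pct_used:
        alerts.append(f"{log['percent_used']}% of rated endurance used")
    if log.get("media_errors", 0) > 0:
        alerts.append(f"{log['media_errors']} media/data integrity errors")
    return alerts
```

In practice the input would be the parsed JSON from nvme-cli's smart-log command (for example with --output-format=json), and any non-empty result would be forwarded to whatever alerting channel administrators already watch.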

Are your current RAID strategies truly safeguarding against unseen vulnerabilities in lightning-fast environments?

Questioning established configurations is essential for continuous improvement. In complex storage architectures, even small misconfigurations or firmware discrepancies between drives can cascade into significant failures. Consistent testing, timely firmware updates, and configurations that evolve with hardware advancements are the pillars of a resilient high-speed storage system. Industry experts consistently stress the importance of dynamic, well-informed configurations.
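One form of consistent testing that targets silent data corruption is a checksum manifest: hash every file once when it lands on the array, then re-verify on a schedule or after firmware and driver changes. This is a minimal sketch using SHA-256 from Python's standard library; the function names are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 so large media files need little RAM."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: str) -> dict[str, str]:
    """Map each file's path (relative to root) to its SHA-256 digest."""
    base = Path(root)
    return {str(p.relative_to(base)): sha256_of(p)
            for p in sorted(base.rglob("*")) if p.is_file()}


def verify_manifest(root: str, manifest: dict[str, str]) -> list[str]:
    """Return files that are now missing or whose contents no longer match."""
    base = Path(root)
    bad = []
    for rel, expected in manifest.items():
        p = base / rel
        if not p.is_file() or sha256_of(p) != expected:
            bad.append(rel)
    return bad
```

Store the manifest off the array (for example as JSON on another volume) so the reference hashes cannot be corrupted along with the data they protect.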

Enhance your storage setup by exploring detailed case studies or engaging with community discussions to exchange best practices. Our external SSD strategies can provide additional actionable insights.

Leverage Advanced Firmware Strategies to Fortify Data Pathways

In high-performance environments, firmware acts as the gatekeeper of NVMe SSD stability and efficiency. Running firmware with well-tuned power management and error correction can significantly reduce crashes during intensive write cycles. Features such as a volatile write cache and autonomous error recovery help keep data flowing smoothly under sustained workloads, although a volatile write cache should be paired with power-loss protection, since cached writes are lost if power fails before they reach flash. Keep up with your SSD vendor's firmware releases and rigorously test each update in a controlled environment before deployment to prevent unforeseen incompatibilities.
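Testing updates before deployment is easier to enforce when the results of that testing are written down. The sketch below, with hypothetical names, compares each drive's model and firmware revision (in the shape of a model/firmware_rev inventory) against an allowlist of revisions that passed in-house validation, and reports every drive that is not on it.

```python
def firmware_compliance(inventory: dict[str, dict[str, str]],
                        approved: dict[str, set[str]]) -> dict[str, str]:
    """Flag drives whose firmware has not passed in-house testing.

    inventory: {controller: {"model": ..., "firmware_rev": ...}}
    approved:  {model: {revisions validated in a test environment}}
    Returns {controller: reason} for every non-compliant drive.
    """
    problems = {}
    for ctrl, info in inventory.items():
        model, rev = info.get("model"), info.get("firmware_rev")
        allowed = approved.get(model)
        if allowed is None:
            problems[ctrl] = f"model {model!r} has no validated firmware list"
        elif rev not in allowed:
            problems[ctrl] = f"firmware {rev!r} not validated for {model!r}"
    return problems
```

An empty result means every drive runs a validated revision; a new vendor release is added to `approved` only after it has survived the test environment.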

How can firmware-level optimizations mitigate thermal throttling and sustain peak writing speeds?

Firmware that integrates adaptive thermal management algorithms can dynamically adjust drive performance to prevent overheating without sacrificing too much throughput. Studies such as those published in the IEEE Transactions on Computers illustrate that firmware-based thermal throttling algorithms, when finely tuned, provide a balanced approach to maintaining speed while safeguarding hardware longevity. This approach minimizes unexpected shutdowns during prolonged high-IO operations, ensuring sustained data throughput and system resilience.
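Drive-side thermal throttling lives in vendor firmware and cannot be inspected directly, but the core idea of adaptive throttling can be illustrated with a hysteresis controller: step performance down when the temperature crosses an upper threshold, and step it back up only after it falls below a lower one. The thresholds and the four performance levels below are illustrative assumptions, not values taken from any drive.

```python
from dataclasses import dataclass


@dataclass
class ThermalThrottle:
    """Hysteresis throttle: step down when hot, step up only once cooled.

    Thresholds and level count are illustrative, not real firmware values.
    """
    throttle_at_c: float = 75.0   # at or above this, reduce performance
    resume_below_c: float = 65.0  # below this, restore performance
    max_level: int = 3            # 3 = full speed, 0 = most throttled
    level: int = 3

    def update(self, temp_c: float) -> int:
        if temp_c >= self.throttle_at_c and self.level > 0:
            self.level -= 1       # too hot: give up some throughput
        elif temp_c < self.resume_below_c and self.level < self.max_level:
            self.level += 1       # cooled off: recover throughput
        return self.level         # between thresholds: hold steady


if __name__ == "__main__":
    ctl = ThermalThrottle()
    print([ctl.update(t) for t in [60, 76, 78, 70, 68, 64, 60]])
```

The gap between the two thresholds is the key design choice: with a single threshold, a drive hovering near the limit would flip between throttled and unthrottled states on every reading.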

Designing a Proactive Monitoring Ecosystem for Preventative Maintenance

Implementing continuous monitoring solutions that integrate SMART diagnostics, temperature sensors, and real-time error logging allows administrators to anticipate storage failures before they occur. Advanced monitoring dashboards utilizing predictive analytics can analyze patterns in drive behavior—such as increased error rates or thermal anomalies—and trigger automated alerts or preemptive data migrations. For organizations managing large-scale NVMe arrays, such systems are critical for minimizing downtime and maintaining data integrity.
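Cumulative counters such as media errors only ever increase, so a useful pattern to watch is how fast they grow rather than their absolute value. The sketch below keeps a sliding window of recent polls and flags a drive when the counter rises by more than a set amount within that window. The class name, window size, and limit are hypothetical tuning choices.

```python
from collections import deque


class ErrorTrend:
    """Flag a drive when a cumulative error counter grows too fast.

    window: number of recent polls to keep (hypothetical default).
    max_increase: allowed growth across the window (hypothetical default).
    """

    def __init__(self, window: int = 6, max_increase: int = 2):
        self.samples: deque[int] = deque(maxlen=window)
        self.max_increase = max_increase

    def add(self, counter_value: int) -> bool:
        """Record one poll; return True if growth across the window is too high."""
        self.samples.append(counter_value)  # oldest sample drops off automatically
        return self.samples[-1] - self.samples[0] > self.max_increase
```

A True result is the point at which a monitoring system might raise an alert or start a preemptive data migration off the suspect drive.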

What role do machine learning models play in predictive storage failure analysis?

Emerging research, highlighted in systems reliability journals, shows that machine learning algorithms can process vast datasets of drive telemetry to identify subtle warning signs that precede hardware failures. By training models on extensive historical failure data, IT teams can develop predictive maintenance schedules that preemptively replace or repair problematic drives. Integrating these models with existing monitoring tools elevates storage management from reactive to proactive, ultimately reducing crash incidents and enhancing overall system robustness.

![NVMe RAID 0: Why your storage array keeps crashing [Fixed]](https://storage.workstationwizard.com/wp-content/uploads/2026/01/NVMe-RAID-0-Why-your-storage-array-keeps-crashing-Fixed.jpeg)
